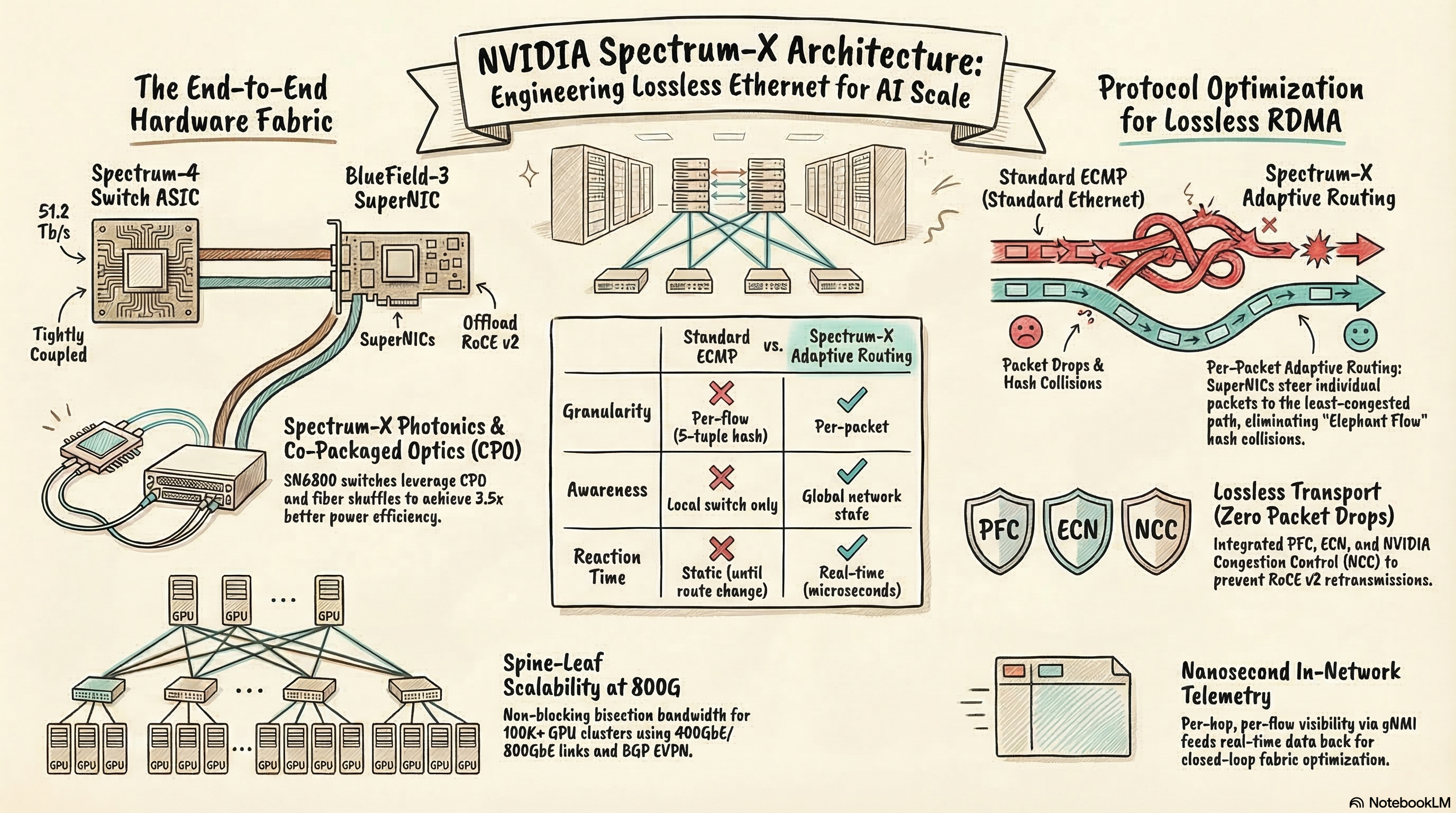

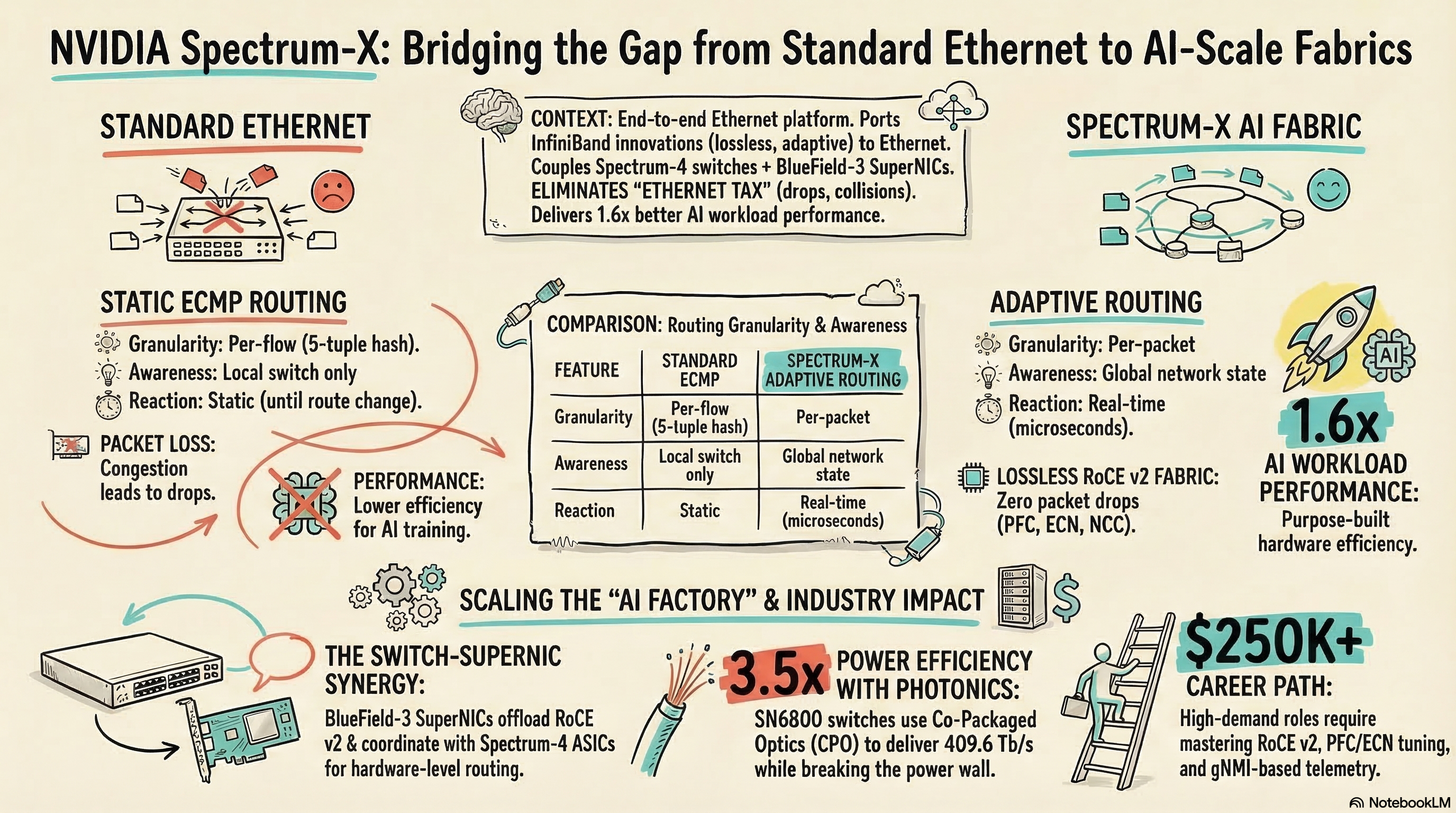

NVIDIA Spectrum-X is the platform that proved Ethernet can compete with InfiniBand for AI training workloads — and it’s winning. By tightly coupling Spectrum-4 switch ASICs with BlueField-3 SuperNICs, Spectrum-X achieves 1.6x better AI workload performance than off-the-shelf Ethernet while maintaining the cost, ecosystem, and operational advantages that made Ethernet the standard for everything else in the data center.

Key Takeaway: Spectrum-X is not faster Ethernet — it’s a fundamentally different architecture that ports three InfiniBand innovations (lossless transport, adaptive routing, in-network telemetry) to Ethernet, and network engineers who understand these mechanisms will design the AI fabrics of the next decade.

What Makes Spectrum-X Different from Standard Ethernet?

Standard Ethernet was designed for general-purpose networking — oversubscription is expected, packet drops are handled by TCP retransmission, and ECMP distributes traffic based on flow hashing. This works fine for web servers and databases. It’s catastrophic for AI training.

According to NVIDIA Developer (2026), Spectrum-X was “specifically designed as an end-to-end architecture to optimize AI workloads” using three innovations ported from InfiniBand:

Innovation 1: Lossless Ethernet (Zero Packet Drops)

AI training uses RDMA over Converged Ethernet (RoCE v2) for GPU-to-GPU communication. RoCE requires a lossless network — any packet drop triggers expensive retransmission that cascades across the entire training job because all GPUs must synchronize.
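To see why a single drop matters so much at scale, here is a minimal back-of-the-envelope sketch. It assumes each GPU's flow is independently hit by a retransmission with some small probability per training step (the `1e-4` figure is a hypothetical value for illustration, not a measured number) and that a synchronous step finishes only when the slowest GPU does.

```python
def prob_step_delayed(num_gpus: int, p_flow_hit: float) -> float:
    """Probability that at least one flow in a step suffers a retransmission.

    Assumes flows are independent and each is hit with probability
    p_flow_hit (a hypothetical, illustrative figure).
    """
    return 1.0 - (1.0 - p_flow_hit) ** num_gpus


def step_time(per_gpu_times):
    """A synchronous collective completes when the slowest participant does."""
    return max(per_gpu_times)


if __name__ == "__main__":
    # The same tiny per-flow probability becomes near-certain at large scale.
    for n in (8, 1024, 100_000):
        print(f"{n:>7} GPUs: P(step delayed) = {prob_step_delayed(n, 1e-4):.4f}")

    # One straggler sets the pace for everyone.
    print(step_time([1.0, 1.0, 1.0, 5.0]))
```

Under these assumptions, a drop rate that is negligible for 8 GPUs delays almost every step at 100K GPUs, which is the cascade effect described above.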

Standard Ethernet handles congestion by dropping packets. Spectrum-X implements:

- Priority Flow Control (PFC) — pauses the sender before buffer overflow

- Explicit Congestion Notification (ECN) — signals congestion before drops occur

- NVIDIA Congestion Control (NCC) — a proprietary algorithm that reacts faster than standard DCQCN

The result: zero packet drops under congestion, even at 100K+ GPU scale. According to SDxCentral’s architecture review, NVIDIA took “lossless networking to eliminate retransmission delays” directly from InfiniBand and applied it to Ethernet.
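The interplay between ECN and PFC can be sketched in a few lines. This is not NVIDIA's proprietary algorithm; it is the generic RED-style marking curve used by DCQCN-type schemes, with a PFC pause threshold placed above the ECN range so that senders slow down before the switch has to pause the link. All threshold values below are hypothetical.

```python
def ecn_mark_probability(queue_kb: float, k_min_kb: float,
                         k_max_kb: float, p_max: float) -> float:
    """RED-style ECN marking: 0 below k_min, linear ramp up to p_max at
    k_max, and every packet marked once the queue exceeds k_max."""
    if queue_kb <= k_min_kb:
        return 0.0
    if queue_kb >= k_max_kb:
        return 1.0
    return p_max * (queue_kb - k_min_kb) / (k_max_kb - k_min_kb)


def pfc_should_pause(queue_kb: float, xoff_kb: float) -> bool:
    """PFC sends a pause frame for the priority once the queue hits XOFF."""
    return queue_kb >= xoff_kb


if __name__ == "__main__":
    # Hypothetical thresholds: ECN reacts first, PFC is the last resort.
    K_MIN, K_MAX, P_MAX, XOFF = 150, 1500, 0.1, 2000
    for depth in (100, 825, 1600, 2100):
        print(depth,
              ecn_mark_probability(depth, K_MIN, K_MAX, P_MAX),
              pfc_should_pause(depth, XOFF))
```

The design choice to note is the ordering of thresholds: if XOFF sat below `K_MIN`, PFC would fire before congestion control had any chance to act, and pause frames would spread congestion upstream.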

Innovation 2: Adaptive Routing (Beyond ECMP)

Traditional ECMP (Equal-Cost Multi-Path) hashes flows to paths based on header fields. The problem: AI training generates elephant flows — massive, sustained data transfers between GPU pairs that can saturate a single path while adjacent paths sit idle.

According to NVIDIA Developer (2026), “conventional IP routing protocols, such as ECMP, struggle to handle the large, sustained data flows that AI models generate.”

Spectrum-X adaptive routing works differently:

| Feature | Standard ECMP | Spectrum-X Adaptive Routing |
|---|---|---|
| Granularity | Per-flow (5-tuple hash) | Per-packet |
| Awareness | Local switch only | Global network state |
| Reaction time | Static (until route change) | Real-time (microseconds) |
| Elephant flow handling | Hash collision → congestion | Spread across all paths |

The Spectrum-4 switch and BlueField-3 SuperNIC work in concert: the switch monitors path congestion in real time and sends each packet down the least-congested path, while the receiving SuperNIC puts packets that arrive out of order back into sequence before they reach GPU memory. This requires tight hardware coupling that can’t be replicated with off-the-shelf switches and standard NICs.
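The difference between per-flow hashing and per-packet spraying is easy to see in a toy model. The sketch below is a simplification under stated assumptions: it uses CRC32 as a stand-in for a switch's ECMP hash, and it treats "least congested" as "fewest packets sent so far" rather than real queue depth. The flow tuples are made up, apart from UDP port 4791, which is the standard RoCE v2 destination port.

```python
import zlib


def ecmp_loads(flows, num_paths):
    """Per-flow ECMP: every packet of a flow follows the path its hash picks."""
    loads = [0] * num_paths
    for five_tuple, packets in flows:
        path = zlib.crc32(five_tuple) % num_paths  # stand-in for the ASIC hash
        loads[path] += packets
    return loads


def per_packet_loads(flows, num_paths):
    """Per-packet spraying: each packet goes to the currently least-loaded path."""
    loads = [0] * num_paths
    for _, packets in flows:
        for _ in range(packets):
            path = loads.index(min(loads))
            loads[path] += 1
    return loads


if __name__ == "__main__":
    # Eight hypothetical elephant flows of equal size across eight paths.
    flows = [
        (f"10.0.0.{i}:49152->10.0.1.{i}:4791/udp".encode(), 1000)
        for i in range(8)
    ]
    print("ECMP      :", ecmp_loads(flows, 8))
    print("Per-packet:", per_packet_loads(flows, 8))
```

With a handful of large flows, any hash collision leaves one path carrying a multiple of its fair share while another sits idle; per-packet spraying keeps the paths within one packet of each other. The cost is out-of-order arrival, which is why the endpoint has to participate.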

Innovation 3: In-Network Telemetry

Spectrum-X provides per-flow, per-hop telemetry at nanosecond granularity. According to NVIDIA Developer, this “high-frequency telemetry and advanced monitoring provide real-time granular visibility into AI data center networks.”

Traditional SNMP polling gives you 5-minute averages. Spectrum-X telemetry gives you per-packet latency measurements, real-time congestion maps, and per-flow path traces. This isn’t just monitoring — it feeds back into the adaptive routing system for closed-loop optimization.
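A small sketch shows what a coarse average hides. The samples below are synthetic link-utilization values (hypothetical data, one value per sampling tick); the point is only that a short burst at line rate disappears in the average but is obvious in the fine-grained series.

```python
from statistics import mean


def coarse_average(samples):
    """What a long polling interval reports: a single averaged number."""
    return mean(samples)


def find_bursts(samples, threshold):
    """Return (start, end) index ranges where utilization >= threshold."""
    bursts = []
    start = None
    for i, value in enumerate(samples):
        if value >= threshold and start is None:
            start = i
        elif value < threshold and start is not None:
            bursts.append((start, i - 1))
            start = None
    if start is not None:  # burst still running at the end of the window
        bursts.append((start, len(samples) - 1))
    return bursts


if __name__ == "__main__":
    # Synthetic window: 20% utilization with a 5-tick burst at line rate.
    samples = [0.2] * 100 + [1.0] * 5 + [0.2] * 195
    print("average:", round(coarse_average(samples), 3))
    print("bursts :", find_bursts(samples, threshold=0.95))
```

The averaged view suggests a lightly loaded link, while the per-sample view pinpoints exactly when the queue would have built up, which is the kind of signal a closed-loop routing system needs.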

How Does the Spectrum-X Architecture Actually Work?

The Two-Component System

Spectrum-X is an end-to-end system, not just a switch:

Spectrum-4 Switch ASIC:

- 51.2 Tb/s switching capacity

- 128 ports of 400GbE or 64 ports of 800GbE

- Hardware adaptive routing engine

- In-network computing capabilities

- Runs Cumulus Linux or SONiC

BlueField-3 SuperNIC:

- 400Gbps network connectivity

- Hardware RoCE v2 offload

- Congestion control offload (PFC, ECN, NCC)

- Endpoint adaptive routing coordination

- Crypto offload for multi-tenant isolation

According to WEKA’s platform analysis, “Spectrum-4 switches form the backbone of the network, optimizing data paths and load-balancing traffic using adaptive routing” while “BlueField-3 SuperNICs offload networking and security tasks from the host CPU.”

The critical design point: the SuperNIC is not optional. Standard NICs can connect to Spectrum-4 switches, but you lose the adaptive routing coordination and advanced congestion control that deliver the 1.6x performance gain. The system-level optimization comes from the switch-NIC coupling.

Spine-Leaf Topology at AI Scale

Spectrum-X deploys in a standard spine-leaf topology, but the scale is extreme:

                 [Spine Layer - Spectrum-4 SN5600]
                /     |      |      |      |     \
               /      |      |      |      |      \
    [Leaf - SN5600] [Leaf] [Leaf] [Leaf] [Leaf] [Leaf]
       |   |   |   |   |   |   |   |
      GPU GPU GPU GPU GPU GPU GPU GPU
    (BlueField-3 SuperNIC in each server)

At 100K GPU scale, this fabric requires:

- ~3,000+ leaf switches

- ~200+ spine switches

- Every link at 400G or 800G

- Non-blocking bisection bandwidth
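For a rough feel of where numbers like these come from, here is an idealized sizing sketch. It assumes a textbook non-blocking fat-tree in which every switch has the same port count (radix), each leaf splits its ports evenly between GPUs and uplinks, and each GPU uses one switch port. Real deployments use multi-plane and rail-optimized designs, so treat the output as an order-of-magnitude check rather than a bill of materials.

```python
import math


def leaf_count(num_gpus: int, switch_radix: int) -> int:
    """Leaves needed when half of each leaf's ports face GPUs (non-blocking)."""
    down_ports = switch_radix // 2
    return math.ceil(num_gpus / down_ports)


def max_endpoints(switch_radix: int, tiers: int) -> int:
    """Endpoint capacity of an idealized non-blocking fat-tree.

    1 tier: radix; 2 tiers: radix^2 / 2; 3 tiers: radix^3 / 4.
    """
    return 2 * (switch_radix // 2) ** tiers


def tiers_needed(num_gpus: int, switch_radix: int) -> int:
    """Smallest number of switching tiers that can host num_gpus endpoints."""
    if switch_radix < 4:
        raise ValueError("radix must be at least 4 for the fabric to scale")
    tiers = 1
    while max_endpoints(switch_radix, tiers) < num_gpus:
        tiers += 1
    return tiers


if __name__ == "__main__":
    gpus = 100_000
    for radix, speed in ((128, "400G"), (64, "800G")):
        print(f"{speed} ports, radix {radix}: "
              f"{leaf_count(gpus, radix)} leaves, "
              f"{tiers_needed(gpus, radix)} tiers, "
              f"2-tier limit {max_endpoints(radix, 2)} endpoints")
```

With 64 ports of 800G per switch and 32 of them facing GPUs, the leaf count lands in the same range as the ~3,000 figure above. The model also suggests that a strictly non-blocking fabric at this scale needs more than two switching tiers, so spine counts depend heavily on design choices this sketch does not capture.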

According to NVIDIA’s investor announcement (2025), Spectrum-XGS extends this to connect distributed data centers into giga-scale AI super-factories — multi-site fabrics spanning multiple buildings or campuses.

How Does Spectrum-X Compare to InfiniBand?

We covered the protocol-level comparison in our RoCE vs InfiniBand deep dive. Here’s how Spectrum-X specifically stacks up:

| Dimension | InfiniBand (Quantum-X) | Spectrum-X (Ethernet) |
|---|---|---|
| Raw performance | Best-in-class | 1.6x over OTS Ethernet (approaching IB) |
| Cost per port | Higher | 30-50% lower |
| Multi-tenant support | Limited | Native (VLAN, VRF, ACL) |
| Vendor ecosystem | NVIDIA only | Multiple switch vendors |
| Management tools | UFM (NVIDIA proprietary) | Standard Ethernet tools + Cumulus |
| Interop with existing DC | Separate fabric | Unified Ethernet fabric |
| Adaptive routing | Yes (native) | Yes (ported from IB) |
| GPUs supported | Millions (Quantum-X800) | Millions (Spectrum-X Photonics) |

The trend is clear: hyperscalers are choosing Ethernet. As we reported in our Meta $135B AI buildout analysis, Meta selected Spectrum-X Ethernet for its massive AI infrastructure — the largest single commitment to Ethernet-based AI networking.

Microsoft, xAI, and CoreWeave have also deployed or announced Spectrum-X Ethernet fabrics. InfiniBand remains strong for the most latency-sensitive HPC workloads, but the market is tilting decisively toward Ethernet for AI.

What Is Spectrum-X Photonics?

According to NVIDIA’s announcement (2025), Spectrum-X Photonics uses co-packaged optics (CPO) to integrate optical engines directly on the switch ASIC package. This is the same silicon photonics technology we covered in our STMicro PIC100 analysis.

The flagship product is the SN6800 — a quad-ASIC switch delivering:

- 409.6 Tb/s total bandwidth in a single chassis

- Integrated fiber shuffle mechanism for flat GPU cluster scaling

- 3.5x power efficiency improvement over legacy optical interconnects

- 10x greater resiliency through integrated redundancy

According to financial analysis (February 2026), Spectrum-X Photonics is “effectively dismantling the ‘Power Wall’ that has threatened to stall the growth of AI Factories.”

What Skills Do Network Engineers Need for Spectrum-X?

Spectrum-X runs on Ethernet — the protocol you already know. But AI-scale Ethernet requires skills beyond traditional switching:

Must-Have Skills

| Skill | Why It Matters | Learning Path |
|---|---|---|
| RoCE v2 | GPU-to-GPU RDMA transport | NVIDIA DOCA documentation |
| PFC configuration | Lossless Ethernet requires per-priority flow control | CCIE DC QoS topics |
| ECN/DCQCN tuning | Congestion control without drops | NVIDIA deployment guides |
| Spine-leaf at 400G/800G | AI fabric topology | CCIE DC fundamentals |
| BGP EVPN | Overlay for multi-tenant AI clouds | CCIE DC blueprint |
| Telemetry (gNMI) | AI fabric monitoring at scale | CCIE Automation topics |

The CCIE Connection

Every skill in the table above maps to existing CCIE blueprint topics:

- CCIE Data Center — VXLAN EVPN, spine-leaf design, NX-OS QoS

- CCIE Enterprise — QoS frameworks, PFC, ECN

- CCIE Automation — gNMI telemetry, streaming monitoring, Python scripts

The engineers being hired for Spectrum-X deployments aren’t coming from a new discipline — they’re CCIE-level network engineers who added RoCE and lossless Ethernet to their existing skill set.

According to salary data aggregated across LinkedIn and Glassdoor (2026), AI infrastructure network engineers at hyperscalers earn $180K-$250K+, with the premium going to those who can configure and troubleshoot lossless Ethernet fabrics at scale.

Frequently Asked Questions

What is NVIDIA Spectrum-X and how is it different from standard Ethernet?

Spectrum-X is NVIDIA’s purpose-built Ethernet networking platform for AI workloads. It combines Spectrum-4 switch ASICs with BlueField-3 SuperNICs to deliver lossless networking, adaptive routing, and advanced congestion control — achieving 1.6x better AI performance than off-the-shelf Ethernet.

Why are hyperscalers choosing Spectrum-X Ethernet over InfiniBand?

Ethernet offers lower cost per port, broader vendor ecosystem, multi-tenant isolation, and familiar management tools. Spectrum-X closes the performance gap with InfiniBand by eliminating the “Ethernet tax” — packet drops, ECMP hash collisions, and head-of-line blocking.

What is a BlueField-3 SuperNIC?

A SuperNIC is a specialized network adapter that offloads RoCE v2 transport and congestion control from the host CPU. Unlike a standard NIC, it works in concert with the Spectrum-4 switch: the switch spreads packets across paths based on real-time network state, and the SuperNIC restores packet order at the receiving end.

What networking skills do engineers need for Spectrum-X deployments?

Engineers need RoCE v2 configuration, Priority Flow Control and ECN tuning, lossless Ethernet design, spine-leaf fabric architecture at 400G/800G, and telemetry with gNMI. These are extensions of traditional CCIE DC and Enterprise skills.

How does Spectrum-X Photonics scale to millions of GPUs?

Spectrum-X Photonics uses co-packaged optics to integrate optical engines directly on the switch ASIC package. The quad-ASIC SN6800 switch delivers 409.6 Tb/s total bandwidth with 3.5x better power efficiency than legacy optical interconnects.

Ethernet won the AI networking war — not because it was always the best protocol for the job, but because NVIDIA invested the engineering effort to close the gap with InfiniBand while preserving Ethernet’s cost and ecosystem advantages. Network engineers who understand lossless Ethernet, adaptive routing, and RoCE at scale are building the fabrics that train the next generation of AI models.
