IBM closed its $11.4 billion acquisition of Confluent on March 17, 2026, making it the largest data infrastructure deal in recent memory and putting the Apache Kafka company at the center of IBM’s enterprise AI and hybrid cloud strategy. For network engineers, this isn’t just a Wall Street headline — Confluent’s streaming platform is the infrastructure layer that powers real-time network telemetry, AIOps pipelines, and the event-driven architectures that make intent-based networking actually work.

Key Takeaway: If you’re building network observability or automation pipelines, Kafka-based streaming is about to become as fundamental as SNMP, and IBM just made an $11.4 billion bet on exactly that.

What Is Confluent and Why Should Network Engineers Care?

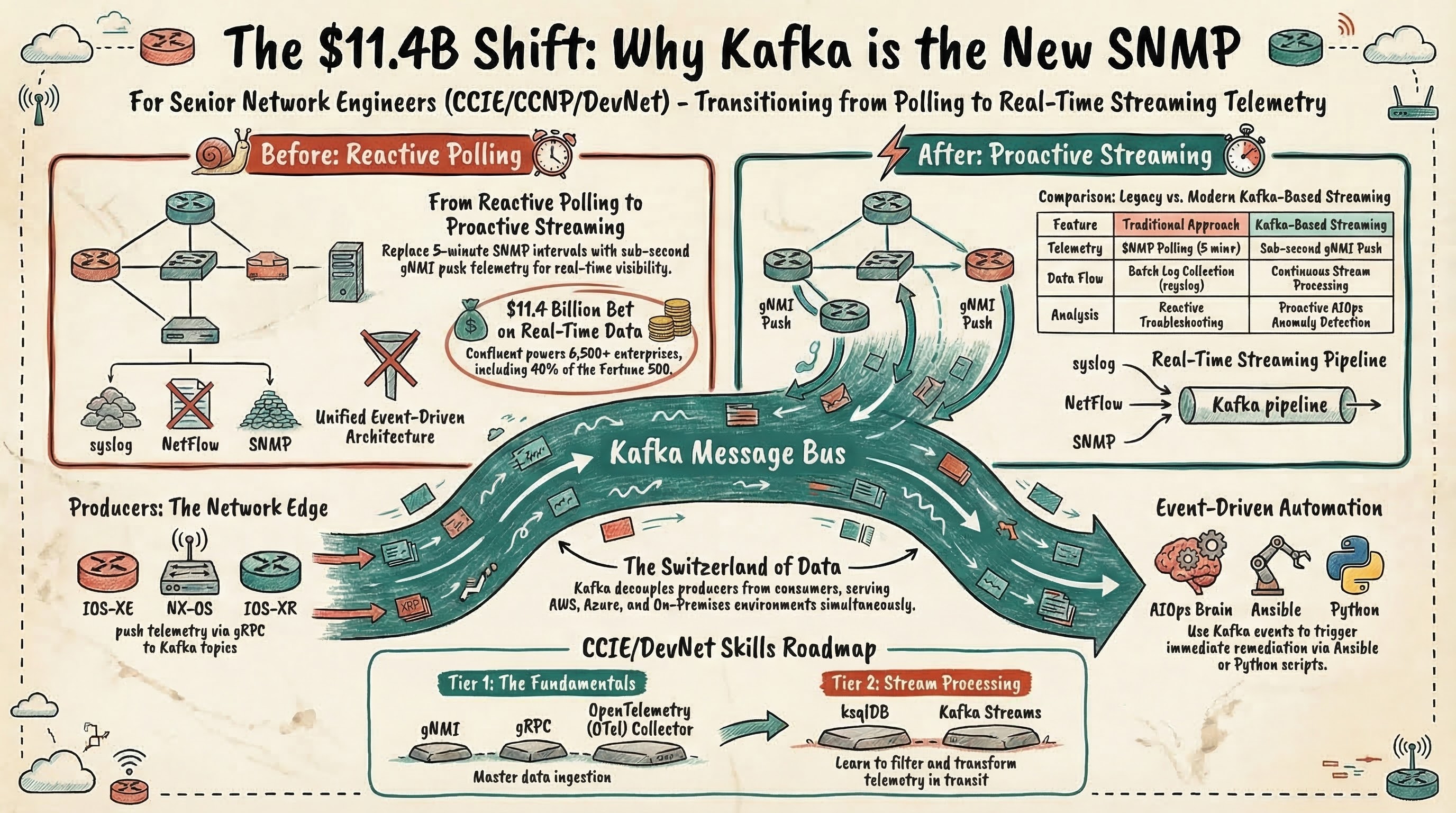

Confluent is the enterprise platform built on Apache Kafka, the open-source distributed event streaming system originally developed at LinkedIn. According to IBM’s press release (2026), Confluent serves more than 6,500 enterprises, including 40% of the Fortune 500, handling real-time data pipelines for financial services, healthcare, manufacturing, and retail.

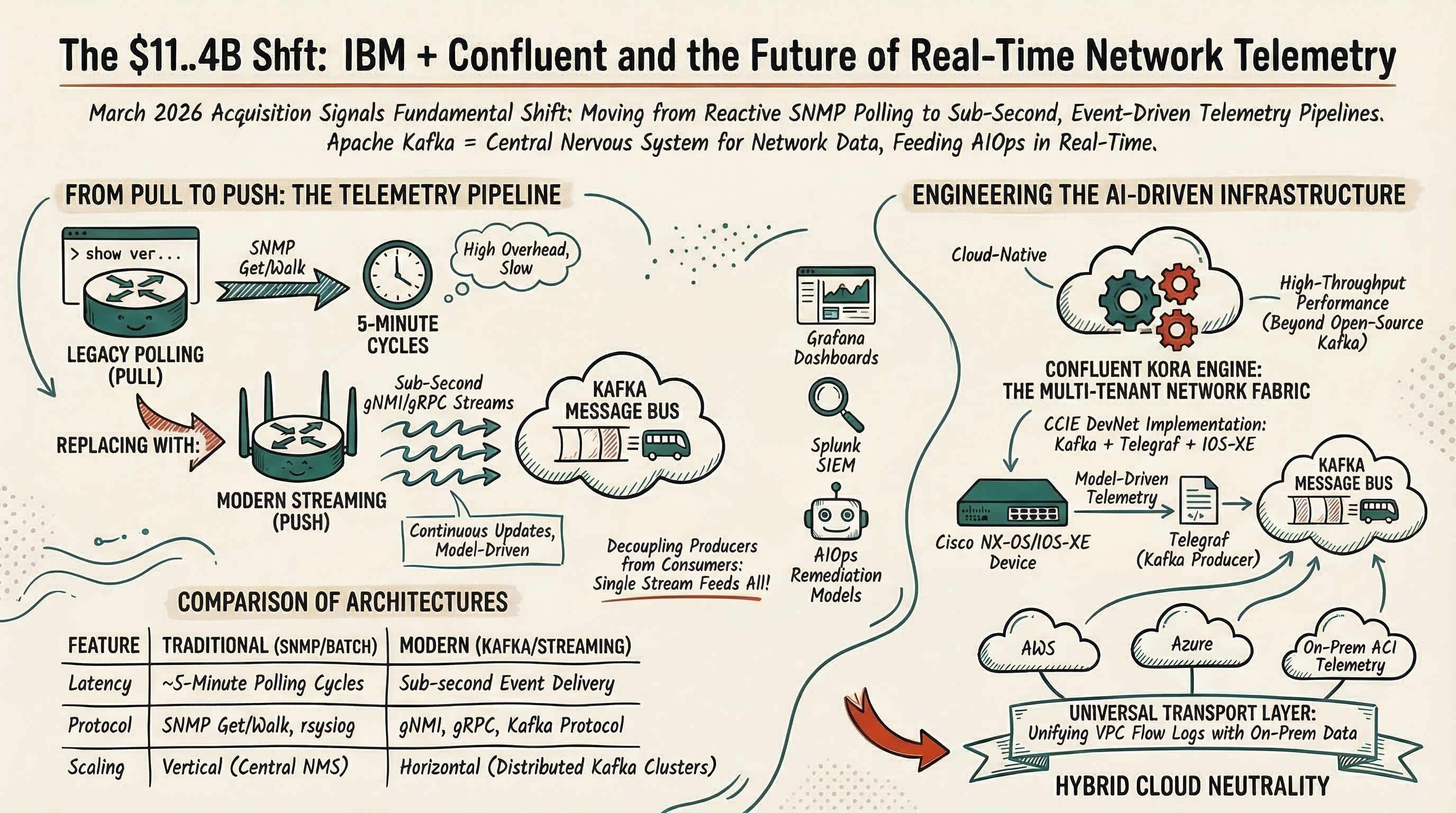

In plain networking terms, think of Kafka as a massively scalable message bus. Instead of your monitoring system polling devices every 5 minutes via SNMP, Kafka enables a publish-subscribe model where network devices, controllers, and applications continuously push events — syslog messages, gNMI telemetry updates, NetFlow records, BGP state changes — into topics that any downstream consumer can process in real time.

Here’s why that matters for your daily work:

| Traditional Approach | Kafka-Based Streaming |
|---|---|
| SNMP polling every 5 min | Sub-second gNMI push telemetry |
| Batch log collection via rsyslog | Continuous syslog streaming to Kafka topics |
| Periodic NetFlow exports | Real-time flow analysis with stream processing |
| Manual correlation across tools | Unified event pipeline feeding all consumers |
| Reactive troubleshooting | Proactive anomaly detection via AIOps |

According to WWT’s technical guide on modernizing network observability (2026), Apache Kafka serves as “the event streaming backbone” in modern telemetry architectures, sitting between network collectors and observability platforms like Grafana, Splunk, and Elastic.

Why IBM Paid $11.4 Billion: The Real-Time Data Imperative

The price tag makes sense when you understand what IBM is really buying. According to Futurum Group analysts Brad Shimmin and Mitch Ashley (2025), IBM’s acquisition is “a massive, declarative bet that the central challenge for enterprise AI has shifted from data at rest to data in motion.”

Five strategic reasons drive the deal:

- Real-time data fabric for AI: Generative AI, agentic systems, and modern analytics depend on streaming, fresh, contextual data — not yesterday’s database snapshot

- AI agent infrastructure: Confluent becomes the event backbone for AI agents, AIOps, and automated decision-making across the enterprise

- watsonx integration: Confluent fills the streaming ingestion gap in IBM’s watsonx AI platform, which previously relied on batch ETL pipelines

- Hybrid cloud neutrality: Confluent runs identically on AWS, Azure, GCP, and on-premises — what Futurum calls “the Switzerland of data streaming”

- Observability and telemetry: High-volume pipeline for streaming infrastructure telemetry to monitoring platforms and AI-driven analysis

As Rob Thomas, IBM’s SVP of Software, put it in the official announcement: “Transactions happen in milliseconds, and AI decisions need to happen just as fast. With Confluent, we are giving clients the ability to move trusted data continuously across their entire operation.”

The deal also includes Confluent’s proprietary Kora engine — a cloud-native, multi-tenant streaming platform rebuilt from the ground up that delivers significant performance improvements over vanilla open-source Kafka. According to Futurum (2025), this engineering moat is what separates Confluent from “good enough” managed Kafka services from the hyperscalers.

How Kafka Powers Network Telemetry Pipelines

If you’ve configured model-driven telemetry on IOS-XE or NX-OS, you’ve already touched one piece of this architecture. Here’s how the full stack works in a modern network observability pipeline:

    Network Devices (gNMI/SNMP/syslog/NetFlow)
                │
                ▼
    Telemetry Collectors (Telegraf, OpenTelemetry Collector)
                │
                ▼
    Apache Kafka Cluster (event streaming backbone)
                │
       ┌────────┼────────┬────────┐
       ▼        ▼        ▼        ▼
    Grafana   Splunk   AIOps    Custom
     (viz)    (SIEM)  (ML/AI) (automation)

Each Kafka topic holds a specific telemetry stream — one for interface counters, another for BGP neighbor state changes, another for syslog events. Consumers subscribe to the topics they need. The beauty is decoupling: your BGP anomaly detection model consumes the same raw data as your Grafana dashboards, but processes it independently.
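The decoupling described above can be illustrated without a broker. The following is a minimal in-memory sketch, not Kafka itself: `TopicLog` and its methods are hypothetical names that mimic a single-partition, append-only topic in which every consumer group tracks its own read offset.

```python
from collections import defaultdict


class TopicLog:
    """Toy stand-in for a single-partition Kafka topic (hypothetical, in-memory)."""

    def __init__(self):
        self._records = []                 # append-only log of events
        self._offsets = defaultdict(int)   # next offset to read, per consumer group

    def publish(self, event):
        """Append an event and return its offset in the log."""
        self._records.append(event)
        return len(self._records) - 1

    def poll(self, group, max_records=10):
        """Return unread events for one group and advance only that group's offset."""
        start = self._offsets[group]
        batch = self._records[start:start + max_records]
        self._offsets[group] = start + len(batch)
        return batch


bgp_events = TopicLog()
bgp_events.publish({"peer": "10.0.0.1", "state": "Established"})
bgp_events.publish({"peer": "10.0.0.1", "state": "Idle"})

# Two consumers read the same records without affecting each other.
dashboard_batch = bgp_events.poll("grafana-dashboard")
detector_batch = bgp_events.poll("bgp-anomaly-detector", max_records=1)
```

Because offsets are stored per group, a slow anomaly detector never holds back the dashboard, and a new consumer can be added later without touching the producers.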

A practical example from a data center running Cisco Nexus 9000 switches:

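    # gNMI subscription sampling interface counters every 10 seconds.
    # gnmic reads Kafka settings from its config file rather than from
    # command-line flags, so gnmic.yaml is assumed to define an output such as:
    #   outputs:
    #     kafka-out:
    #       type: kafka
    #       address: kafka-broker:9092
    #       topic: network-interface-counters
    # Credentials and TLS options are omitted here.
    gnmic subscribe \
      --config gnmic.yaml \
      --address nexus-spine01:50051 \
      --path "/interfaces/interface/state/counters" \
      --stream-mode sample \
      --sample-interval 10s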

This single pipeline replaces the old model of configuring separate SNMP polling intervals, syslog servers, and NetFlow collectors — each with their own transport, format, and failure modes. According to the WWT observability guide (2026), this architecture handles “massive spikes in data throughput” that would overwhelm traditional polling-based systems.
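On the consuming side, each Kafka message still has to be decoded before it is useful. The sketch below assumes messages arrive as a JSON list of events with `timestamp` (nanoseconds), `tags`, and `values` keys, a layout modeled on gnmic's event output format. The tag name `interface_name`, the value paths, and both function names are illustrative assumptions, and the sample numbers are made up.

```python
import json


def extract_counter(message_bytes, value_suffix):
    """Return (interface, timestamp_ns, counter) tuples from one message.

    Assumes a JSON list of events, each with "timestamp", "tags" and "values";
    this layout is an assumption, not a guaranteed wire format.
    """
    samples = []
    for event in json.loads(message_bytes):
        interface = event.get("tags", {}).get("interface_name", "unknown")
        for path, value in event.get("values", {}).items():
            if path.endswith(value_suffix):
                samples.append((interface, event["timestamp"], int(value)))
    return samples


def bits_per_second(previous, current):
    """Convert two (timestamp_ns, octets) samples into an average bit rate."""
    elapsed_s = (current[0] - previous[0]) / 1e9
    if elapsed_s <= 0:
        raise ValueError("samples must be in increasing time order")
    return (current[1] - previous[1]) * 8 / elapsed_s


def make_message(timestamp_ns, in_octets):
    """Build one illustrative message in the assumed layout."""
    return json.dumps([{
        "name": "if-counters",
        "timestamp": timestamp_ns,
        "tags": {"interface_name": "Ethernet1/1"},
        "values": {"/interfaces/interface/state/counters/in-octets": str(in_octets)},
    }]).encode()


# Two samples taken 10 seconds apart.
msg_1 = make_message(1_000_000_000_000, 1_000_000)
msg_2 = make_message(1_010_000_000_000, 13_500_000)

[(_, t1, c1)] = extract_counter(msg_1, "in-octets")
[(_, t2, c2)] = extract_counter(msg_2, "in-octets")
rate = bits_per_second((t1, c1), (t2, c2))  # 12.5 MB in 10 s is 10 Mbit/s
```

The sketch ignores counter wrap and device reboots; a production consumer would need to detect a counter that goes backwards and skip that interval.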

What This Means for AIOps and Intent-Based Networking

IBM’s bet isn’t just about telemetry collection. The real play is feeding AI agents with live operational data. According to IDC research cited in IBM’s press release (2026), more than one billion new logical applications will emerge by 2028, driven by AI that “will only deliver value if the data behind it is live, trusted, and continuously flowing.”

For network engineers, this translates to three concrete shifts:

1. From reactive monitoring to predictive operations. Traditional NMS tools detect problems after they happen. Kafka-backed AIOps platforms process telemetry streams through ML models in real time, catching anomalies — a BGP flap pattern, an unusual traffic spike, a gradual increase in interface errors — before they impact services.

2. From manual remediation to event-driven automation. When Kafka delivers a “link-down” event, an automation consumer can immediately trigger a playbook: reroute traffic, open a ticket, notify the NOC — all within seconds instead of waiting for the next polling cycle.

3. From siloed tools to unified observability. Kafka acts as the single source of truth. Your security team’s SIEM, your NOC’s dashboards, and your automation platform all consume the same stream. No more reconciling discrepancies between what Splunk shows and what Grafana displays.
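As a rough illustration of the first shift, the sketch below flags a telemetry value that sits far from its recent history using a rolling z-score. It is a deliberately simple stand-in for the ML models an AIOps platform would run; the class name, window size, threshold, and sample data are all assumptions.

```python
from collections import deque
from statistics import mean, pstdev


class RollingAnomalyDetector:
    """Flags values far from the recent mean (simple z-score, hypothetical helper)."""

    def __init__(self, window=30, threshold=3.0, min_samples=5):
        self.history = deque(maxlen=window)   # most recent observations only
        self.threshold = threshold            # z-score above which a value is flagged
        self.min_samples = min_samples        # warm-up period before flagging anything

    def observe(self, value):
        """Return True if value is anomalous relative to the window seen so far."""
        anomalous = False
        if len(self.history) >= self.min_samples:
            mu = mean(self.history)
            sigma = pstdev(self.history)
            if sigma > 0:
                anomalous = abs(value - mu) / sigma > self.threshold
            else:
                # A perfectly flat history: any change at all is notable.
                anomalous = value != mu
        self.history.append(value)
        return anomalous


detector = RollingAnomalyDetector(window=20, threshold=3.0)
error_counts = [2, 3, 2, 4, 3, 2, 3, 40, 3]   # made-up interface error deltas
flags = [detector.observe(v) for v in error_counts]
```

Only the jump to 40 is flagged. Note that the outlier is still appended to the window, which inflates the standard deviation afterwards; a more careful detector might exclude flagged values or use a robust statistic such as the median.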

This is the infrastructure layer that makes AI-driven network automation practical at enterprise scale. Without real-time streaming, “intent-based networking” is just marketing — the AI has no live context to act on.
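The event-driven pattern can be sketched as a small router that an automation consumer would call for every message it reads. Everything here is hypothetical: the `EventRouter` class, the event fields, and the handlers, which return strings where a real deployment would call a ticketing system, a chat webhook, or a playbook runner.

```python
from collections import defaultdict


class EventRouter:
    """Maps event types to handler callables (hypothetical automation consumer)."""

    def __init__(self):
        self._handlers = defaultdict(list)

    def on(self, event_type, handler):
        """Register a handler to run whenever an event of this type arrives."""
        self._handlers[event_type].append(handler)

    def dispatch(self, event):
        """Run every handler registered for the event's type; return their results."""
        return [handler(event) for handler in self._handlers.get(event["type"], [])]


def open_ticket(event):
    # Placeholder: a real handler would call a ticketing API here.
    return f"ticket opened for {event['device']} {event['interface']}"


def notify_noc(event):
    # Placeholder: a real handler would post to chat or paging here.
    return f"NOC notified: {event['interface']} down on {event['device']}"


router = EventRouter()
router.on("link-down", open_ticket)
router.on("link-down", notify_noc)

actions = router.dispatch(
    {"type": "link-down", "device": "nexus-spine01", "interface": "Ethernet1/1"}
)
ignored = router.dispatch(
    {"type": "link-up", "device": "nexus-spine01", "interface": "Ethernet1/1"}
)
```

Keeping handlers as plain functions means new reactions can be registered without changing the consumer loop, and each one can be unit tested without a broker.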

The Hybrid Cloud Angle: Why Network Teams Should Pay Attention

Confluent’s cross-cloud neutrality is particularly relevant for network engineers managing hybrid environments. According to Futurum’s analysis (2025), Confluent “runs identically on AWS, Azure, Google Cloud, and on-premises data centers,” functioning as a universal data transport layer.

Consider a common enterprise scenario: you have Cisco ACI in your on-premises data center, SD-WAN connecting branch offices, and workloads in AWS and Azure. Today, telemetry from each environment lives in separate silos with different collection mechanisms. With a Confluent-based streaming fabric:

- On-premises ACI telemetry flows to the same Kafka cluster as AWS VPC Flow Logs

- SD-WAN analytics from vManage feed the same pipeline as Azure Network Watcher

- A single AIOps platform correlates events across all environments in real time

This is what IBM means by a “smart data platform.” Day-one integrations announced with the acquisition include IBM watsonx.data, IBM MQ, IBM webMethods, and — critically for infrastructure teams — IBM’s consulting arm helping clients “build the data foundation their AI needs.”

For engineers working in cloud networking roles, understanding how streaming platforms bridge on-premises and cloud environments is becoming a core competency.
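Correlating events across environments usually starts with mapping differently shaped records onto one schema before they land in a shared topic. The sketch below parses an AWS VPC Flow Log record in its default (version 2) field order and maps a made-up on-premises flow record onto the same envelope. The envelope fields, the on-premises record layout, and both function names are assumptions for illustration.

```python
VPC_FLOW_FIELDS = (
    "version account_id interface_id srcaddr dstaddr srcport dstport "
    "protocol packets bytes start end action log_status"
).split()


def from_vpc_flow_log(line):
    """Parse one AWS VPC Flow Log record in the default (version 2) field order."""
    raw = dict(zip(VPC_FLOW_FIELDS, line.split()))
    return {
        "source": "aws-vpc",
        "src_ip": raw["srcaddr"],
        "dst_ip": raw["dstaddr"],
        "dst_port": int(raw["dstport"]),
        "bytes": int(raw["bytes"]),
        "action": raw["action"].lower(),
    }


def from_onprem_flow(record):
    """Map a hypothetical on-premises flow record onto the same envelope."""
    return {
        "source": "onprem-dc",
        "src_ip": record["src"],
        "dst_ip": record["dst"],
        "dst_port": record["dport"],
        "bytes": record["octets"],
        "action": "accept" if record["permitted"] else "reject",
    }


cloud_event = from_vpc_flow_log(
    "2 123456789010 eni-1235b8ca123456789 172.31.16.139 172.31.16.21 "
    "20641 22 6 20 4249 1418530010 1418530070 ACCEPT OK"
)
onprem_event = from_onprem_flow(
    {"src": "10.1.1.10", "dst": "10.2.2.20", "dport": 443, "octets": 1500, "permitted": True}
)
```

Records with a log status of NODATA or SKIPDATA carry `-` in place of numeric fields, so a production parser would need to handle those before calling `int()`.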

Competitive Landscape: What Happens Next

IBM isn’t the only one betting on real-time streaming. According to market analysis from Futurum (2025), expect hyperscalers to “accelerate innovation and offer aggressive pricing for their native streaming services” in response:

| Provider | Native Streaming Service | Kafka Compatibility |
|---|---|---|
| AWS | Amazon MSK, Kinesis | MSK is managed Kafka; Kinesis is proprietary |
| Microsoft Azure | Event Hubs | Kafka protocol compatible |
| Google Cloud | Managed Kafka, Pub/Sub | Managed Kafka (GA); Pub/Sub is proprietary |
| IBM (+ Confluent) | Confluent Platform | Full Kafka + proprietary Kora engine |

The key differentiator IBM now holds: Confluent’s Kora engine is purpose-built for enterprise-grade streaming at scale, while hyperscaler offerings are “good enough” managed Kafka or proprietary alternatives that create lock-in. For network teams, this means more competition and better tooling across the board — regardless of which cloud provider you’re building on.

The acquisition also mirrors IBM’s purchase of HashiCorp, announced in 2024 and completed in early 2025. Both Confluent (streaming) and HashiCorp (infrastructure-as-code with Terraform) sit at the center of the modern enterprise IT stack. For CCIE automation candidates, this signals where the industry is heading: infrastructure defined and managed through code, with real-time data pipelines connecting everything.

What Network Engineers Should Learn Next

If you’re an automation-focused engineer or CCIE DevNet candidate, here’s a practical learning roadmap based on what this acquisition signals:

Tier 1 — Immediate relevance:

- Streaming telemetry fundamentals: gNMI, gRPC, model-driven telemetry on IOS-XE/NX-OS/IOS-XR

- OpenTelemetry Collector: The vendor-neutral standard for collecting and exporting telemetry data

- Basic Kafka concepts: Topics, producers, consumers, consumer groups, partitions
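To make partitions and consumer groups more concrete, here is a small sketch of the two ideas: records with the same key land on the same partition, and the partitions of a topic are divided among the members of a consumer group. Kafka's Java client hashes keys with murmur2 and supports several assignment strategies; this sketch substitutes CRC32 from the standard library and a plain round-robin, and both function names are hypothetical.

```python
import zlib


def partition_for(key, num_partitions):
    """Pick a partition from the record key (CRC32 stand-in for Kafka's hash)."""
    return zlib.crc32(key.encode("utf-8")) % num_partitions


def assign_round_robin(num_partitions, consumers):
    """Spread partitions across the members of one consumer group."""
    assignment = {consumer: [] for consumer in consumers}
    for partition in range(num_partitions):
        owner = consumers[partition % len(consumers)]
        assignment[owner].append(partition)
    return assignment


# Keying by device name keeps each device's events in order on one partition.
first = partition_for("nexus-spine01", 6)
second = partition_for("nexus-spine01", 6)

# Each partition is owned by exactly one consumer in the group.
group = assign_round_robin(6, ["collector-a", "collector-b"])
```

Using the device hostname as the key is one reasonable choice for telemetry, since ordering is only guaranteed within a partition.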

Tier 2 — Building competency:

- Telegraf + Kafka integration: Configuring Telegraf as a Kafka producer for network device telemetry

- Stream processing basics: Kafka Streams or ksqlDB for filtering and transforming telemetry data

- Observability stack: Kafka → Grafana/Prometheus pipeline for network dashboards

Tier 3 — Advanced differentiation:

- Event-driven automation: Triggering Ansible playbooks or Python scripts from Kafka events

- AIOps integration: Feeding ML models with streaming telemetry for anomaly detection

- Confluent Platform: Schema Registry, Kafka Connect, and enterprise governance features

A lab environment with EVE-NG or CML, a single-node Kafka cluster (Docker Compose makes this trivial), and Telegraf collecting gNMI data from virtual routers gives you hands-on experience with the exact architecture IBM just invested $11.4 billion to own.

The Bigger Picture: Data in Motion Becomes Infrastructure

According to analyst Sanjeev Mohan of SanjMo, quoted in IBM’s press release (2026): “The shift from AI experimentation to production deployment has exposed a critical gap in enterprise data architecture: the inability to deliver trusted, real-time data to the systems that need it most.”

That gap is exactly what network engineers have been solving in a more limited way with streaming telemetry and model-driven programmability. The IBM-Confluent deal validates that this approach — continuous, event-driven data flow instead of periodic batch collection — is the future of all enterprise infrastructure, not just networking.

For the networking profession, the implications are clear: the line between “network engineer” and “data infrastructure engineer” continues to blur. The engineers who understand both sides — how packets traverse the network AND how telemetry data flows through streaming pipelines — will command the most valuable roles in the market.

As Jay Kreps, Confluent’s CEO and co-founder, stated (2026): “As enterprises move from experimenting with AI to running their business on it, helping data flow continuously across the business has never mattered more.”

He’s talking about the exact infrastructure you maintain every day. The question is whether you’re just generating the data or also architecting how it flows.

Frequently Asked Questions

What is Confluent and why did IBM acquire it?

Confluent is the company behind the enterprise version of Apache Kafka, the industry-standard platform for real-time data streaming. IBM acquired Confluent for $11.4 billion to build a unified data platform that feeds live, governed data to AI models and agents across hybrid cloud environments. The deal closed on March 17, 2026.

How does Apache Kafka relate to network engineering?

Kafka serves as the transport layer for streaming network telemetry data — syslog, SNMP traps, gNMI updates, NetFlow records — from network devices into observability platforms like Grafana and Splunk. It replaces batch-based polling with continuous event-driven data pipelines, enabling sub-second visibility into network state.

Will the IBM-Confluent deal affect Cisco networking environments?

Not directly, but it accelerates the industry shift toward streaming telemetry. Cisco’s own Catalyst Center (formerly DNA Center), ThousandEyes, and Nexus Dashboard already use event-driven architectures internally. Engineers managing these platforms will increasingly interact with Kafka-based pipelines underneath, especially in hybrid cloud deployments.

Should CCIE candidates learn Apache Kafka?

Yes, especially DevNet and automation-track candidates. Understanding event-driven architectures, Kafka topics and consumers, and how streaming telemetry integrates with AIOps platforms is becoming essential for senior network roles that bridge traditional networking and modern data infrastructure.

What does real-time data streaming mean for AIOps in networking?

AIOps depends on continuous, fresh data to detect anomalies, predict failures, and trigger automated remediation. Kafka-based streaming replaces the old model of polling devices every 5 minutes with sub-second event delivery, enabling AI models to act on what’s happening now rather than stale snapshots.
