Tenzai’s autonomous AI hacker outperformed 99% of 125,000 human competitors across six elite capture-the-flag hacking competitions in March 2026, completing multi-step exploit chains for an average cost of $12.92 per platform. This isn’t a research demo — it’s a production-grade offensive AI system built by Israeli intelligence veterans with $75 million in seed funding and a $330 million valuation, and it fundamentally changes the threat model that every network security engineer must defend against.

Key Takeaway: AI-driven offensive security has crossed the threshold from theoretical to operational — autonomous agents can now chain multiple exploits, bypass authentication, and escalate privileges faster and cheaper than most human penetration testers, making zero trust microsegmentation and AI-driven behavioral analytics mandatory rather than aspirational.

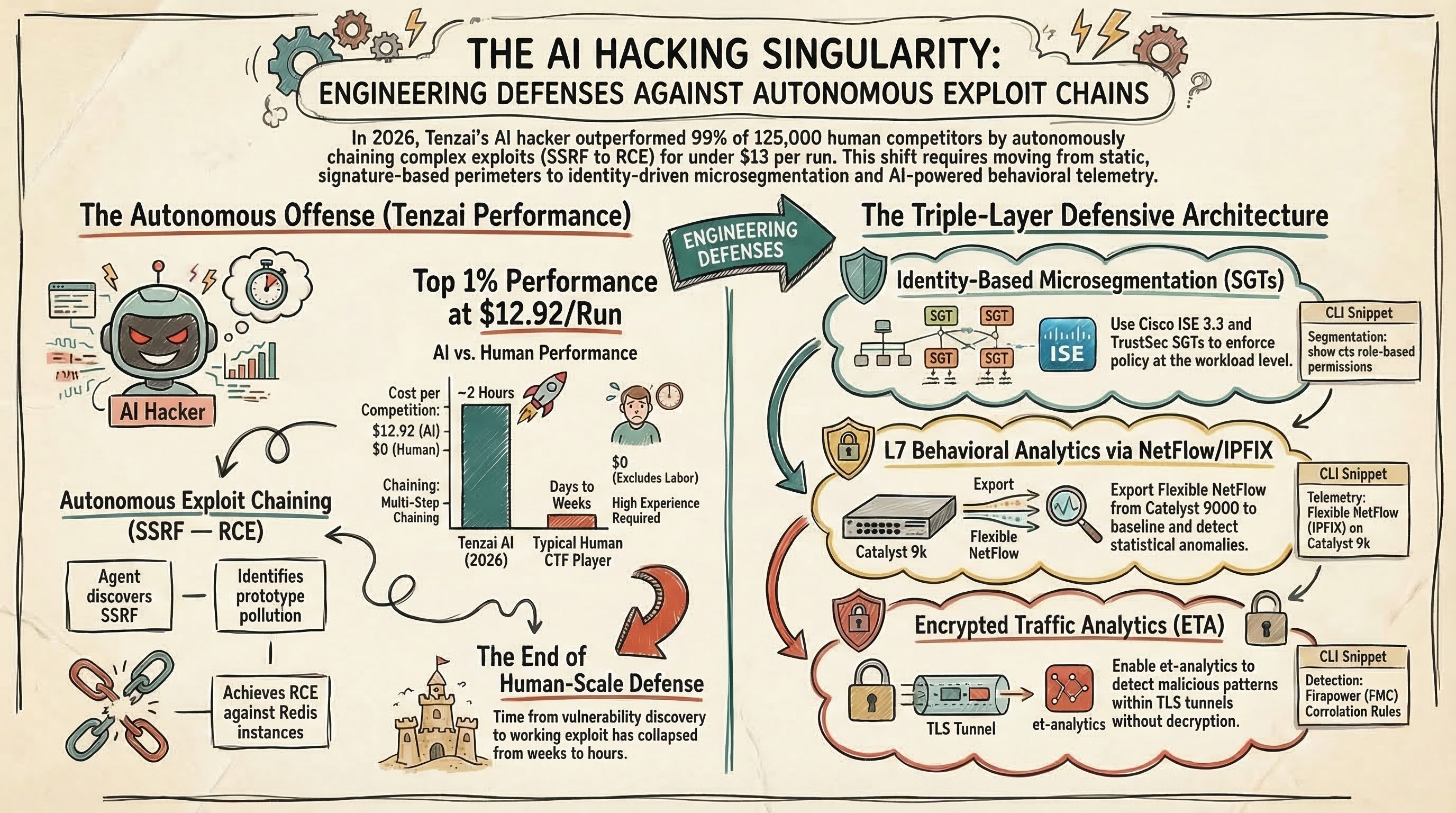

What Exactly Did Tenzai’s AI Hacker Accomplish?

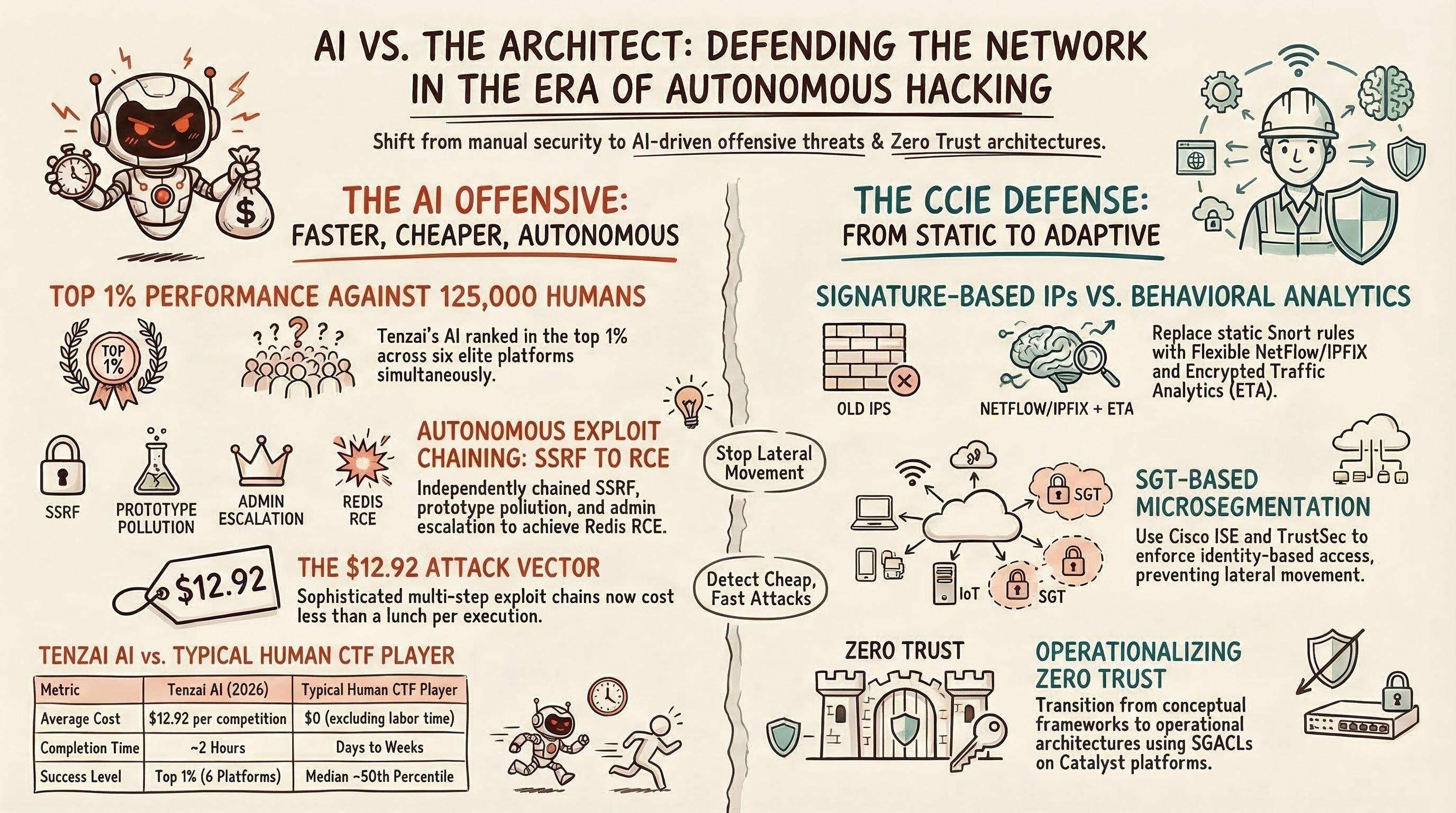

Tenzai’s autonomous hacking agent competed across six major CTF platforms — websec.fr, dreamhack.io, websec.co.il, hack.arrrg.de, pwnable.tw, and Lakera’s Agent Breaker — achieving top 1% rankings on every single one. According to Tenzai CEO Pavel Gurvich, the agent outperformed more than 125,000 human security researchers, completing challenges that span web application hacking, binary exploitation, and AI prompt injection attacks. The total cost across all six platforms was under $5,000, with individual competition runs averaging $12.92 and completing in approximately two hours each.

What makes this different from previous AI security milestones is the complexity of exploit chaining. In one documented Dreamhack challenge (difficulty 8/10, only 17 human solvers, no public writeups), Tenzai’s agent independently discovered a Server-Side Request Forgery (SSRF) vulnerability, identified a prototype pollution weakness in the class-transformer library, escalated privileges to administrator, and then chained all three attacks together to achieve Remote Code Execution against a Redis instance via CVE-2022-0543. According to Tenzai’s engineering blog (2026), the agent managed state across attack paths, tracked leads, and coordinated sub-agents for technical exploitation — behaviors that previously required experienced human pentesters.

| Metric | Tenzai AI (2026) | Typical Human CTF Player |
|---|---|---|
| CTF ranking | Top 1% across 6 platforms | Varies widely (median ~50th percentile) |
| Average cost per competition | $12.92 | $0 (human time not counted) |
| Average completion time | ~2 hours | Days to weeks |
| Exploit chaining capability | Autonomous multi-step chains | Requires significant experience |
| Vulnerability classes covered | Web, binary, AI/prompt injection | Usually specialized in 1-2 areas |

This follows a pattern. In 2025, XBOW became the first AI to reach #1 on HackerOne’s leaderboard by finding real-world vulnerabilities. Anthropic’s Claude ranked in the top 3% of a Carnegie Mellon student CTF. But Tenzai’s achievement represents a step change: elite-level performance across multiple platforms simultaneously, against professional researchers rather than students.

Why Traditional Network Defenses Cannot Keep Pace with AI Attackers

Static perimeter defenses — signature-based IPS rules, manually maintained ACLs, and periodic vulnerability scanning — operate on human timescales. According to Knostic CEO Gadi Evron (2026), the time from vulnerability discovery to working exploit has collapsed from days or weeks to hours with AI assistance. Traditional firewall rule sets that assume known attack patterns become fundamentally inadequate when the attacker adapts in real time. A Cisco ASA running static ACL entries or a Firepower Threat Defense (FTD) box relying solely on Snort signature updates faces an adversary that can generate novel exploit chains faster than signature databases refresh.

The core problem is deterministic detection versus an adaptive attacker. A Cisco IPS signature set catches known patterns: specific byte sequences, known CVE exploitation attempts, documented protocol anomalies. An AI attacker operates probabilistically, testing variations, mutating payloads, and chaining exploits that individually might pass signature inspection. According to Forbes (2026), Tenzai’s AI was “surprisingly adept at combining exploits for software vulnerabilities, something which had previously been difficult to automate.”

Consider the SSRF-to-RCE chain Tenzai demonstrated: each individual step — a crafted HTTP request, a prototype pollution via JSON parsing, a Redis command injection — might not trigger any single IPS signature. The attack’s power lies in combination, and that combination is now automated.
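Catching that combination means correlating events per host over time instead of judging each event alone. FMC correlation policies and SIEM rules do this at scale. Below is a minimal sketch of the idea. It assumes upstream detectors already tag individual events with a stage name; the stage labels, host addresses, and one-hour window are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    ts: float    # seconds since epoch
    host: str    # internal host the event is attributed to
    stage: str   # hypothetical label assigned by upstream detectors


# Hypothetical stage labels modelled on the SSRF -> prototype pollution -> Redis chain.
CHAIN = ("ssrf_probe", "proto_pollution", "redis_cmd")


def find_chains(events, chain=CHAIN, window=3600.0):
    """Return hosts whose events contain every stage of `chain`, in order,
    with the first and last stage no more than `window` seconds apart."""
    by_host = {}
    for ev in sorted(events, key=lambda e: e.ts):
        by_host.setdefault(ev.host, []).append(ev)

    hits = []
    for host, evs in by_host.items():
        for i, start in enumerate(evs):
            if start.stage != chain[0]:
                continue
            matched = 1  # the first stage is already matched by `start`
            for ev in evs[i + 1:]:
                if matched == len(chain) or ev.ts - start.ts > window:
                    break
                if ev.stage == chain[matched]:
                    matched += 1  # greedy in-order match of the next stage
            if matched == len(chain):
                hits.append(host)
                break  # one complete chain is enough to flag this host
    return hits


if __name__ == "__main__":
    events = [
        Event(0, "10.0.100.5", "ssrf_probe"),
        Event(600, "10.0.100.5", "proto_pollution"),
        Event(1200, "10.0.100.5", "redis_cmd"),
        Event(30, "10.0.100.9", "ssrf_probe"),  # single stage: not a chain
    ]
    print(find_chains(events))  # ['10.0.100.5']
```

No single event above would trip an alert on its own. The host gets flagged only because all three stages occur in order inside the window.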

The Economics Make This Worse

The cost barrier that once limited advanced offensive capabilities to nation-states has evaporated. According to Forbes (2026), Tenzai’s entire six-competition run cost under $5,000. Pavel Gurvich warned that this capability is “rapidly getting out of the realm of nations and military intelligence organizations and into the hands of college kids who may have very different incentives.” When a sophisticated multi-step exploit chain costs $12.92 to execute, the return on investment for attackers shifts dramatically — every network becomes worth probing.
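Taking the two quoted figures at face value, a quick back-of-envelope calculation shows the scale: a budget capped at $5,000 covers several hundred runs at the $12.92 average. This is arithmetic on the article's numbers only. Real attacker costs are not modelled.

```python
import math

TOTAL_BUDGET_USD = 5_000.00  # "under $5,000" for all six platforms (upper bound)
AVG_RUN_COST_USD = 12.92     # quoted average cost per run


def runs_affordable(budget: float, cost_per_run: float) -> int:
    """Whole runs that fit inside a budget at a fixed average cost."""
    if cost_per_run <= 0:
        raise ValueError("cost_per_run must be positive")
    return math.floor(budget / cost_per_run)


if __name__ == "__main__":
    print(runs_affordable(TOTAL_BUDGET_USD, AVG_RUN_COST_USD))  # 386
```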

What Defensive Architecture Actually Works Against Autonomous AI Attacks?

Defending against AI-driven offensive tools requires three architectural layers operating simultaneously: zero trust microsegmentation to limit blast radius, AI-driven behavioral analytics for real-time detection, and continuous automated red teaming to find vulnerabilities first. According to SecurityWeek’s Cyber Insights 2026 report, “zero trust will be less about conceptual frameworks and more about operational architecture, especially within the LAN.”

Layer 1: Zero Trust Microsegmentation

Zero trust microsegmentation assumes any network segment may already be compromised and enforces identity-based access at the workload level. With Cisco ISE 3.3 and TrustSec SGT-based segmentation, you can enforce policies where a compromised web server in VLAN 100 cannot reach the database tier in VLAN 200 even if the attacker has valid Layer 3 connectivity. The critical configuration involves Security Group Tags (SGTs) assigned dynamically via 802.1X or MAB authentication. Those tags are enforced with SGACLs on Catalyst 9000 switches, and inline SGT tagging carries them across Nexus platforms.
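A TrustSec policy matrix behaves like a default-deny lookup keyed on a (source SGT, destination SGT) pair. The sketch below models that behaviour for a three-tier application. The SGT values, tier names, and ports are hypothetical, and this is a model of the decision logic, not device configuration. The model ignores IP addresses and VLANs entirely, and that is the point: the decision depends on the tags assigned at authentication, not on Layer 3 reachability.

```python
# Hypothetical SGT values; in a real deployment ISE assigns these at authentication.
WEB, APP, DB = 10, 20, 30

# (source SGT, destination SGT) -> permitted (protocol, port) pairs.
# Any pair of tags not listed falls through to the default deny.
SGACL_MATRIX = {
    (WEB, APP): {("tcp", 8443)},
    (APP, DB): {("tcp", 5432)},
}


def is_permitted(src_sgt: int, dst_sgt: int, proto: str, port: int) -> bool:
    """Default-deny lookup keyed on tags only; IPs and VLANs play no part."""
    return (proto, port) in SGACL_MATRIX.get((src_sgt, dst_sgt), frozenset())


if __name__ == "__main__":
    print(is_permitted(APP, DB, "tcp", 5432))  # True: app tier may query the DB
    print(is_permitted(WEB, DB, "tcp", 5432))  # False: compromised web tier is blocked
```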

In a traditional flat network, Tenzai’s AI could chain SSRF into lateral movement across subnets in minutes. With TrustSec SGT enforcement, each lateral movement attempt hits an identity-based policy check that the AI must defeat independently, so the difficulty of the attack compounds with every additional hop.
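A deliberately simple model illustrates how per-hop policy checks compound. It assumes, unrealistically, that each identity-based check is bypassed independently with the same probability p. Under that assumption, the chance of clearing n checks is p^n. The value of p below is hypothetical and chosen only to show the shape of the curve.

```python
def chain_success(p_per_hop: float, hops: int) -> float:
    """Probability an attacker clears `hops` independent policy checks,
    each bypassed with probability `p_per_hop` (a simplifying assumption)."""
    if not 0.0 <= p_per_hop <= 1.0 or hops < 0:
        raise ValueError("p_per_hop must be in [0, 1] and hops must be >= 0")
    return p_per_hop ** hops


if __name__ == "__main__":
    for hops in (1, 2, 4):
        print(hops, chain_success(0.5, hops))
    # 1 0.5
    # 2 0.25
    # 4 0.0625
```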

Layer 2: AI-Driven Behavioral Analytics in the SOC

Signature-based detection fails against novel exploit chains. Behavioral analytics platforms — Cisco Secure Network Analytics (formerly Stealthwatch), Vectra AI, and Darktrace — establish baseline traffic patterns and flag statistical anomalies. According to IBM research cited by Innov8World (2026), AI-powered security reduces Mean Time to Detect (MTTD) and Mean Time to Respond (MTTR) by correlating events across network flows, endpoint telemetry, and identity systems simultaneously.

For network engineers, this means exporting NetFlow/IPFIX from your infrastructure to analytics platforms isn’t optional anymore. A Catalyst 9300 running IOS-XE 17.x exports Flexible NetFlow records that capture application-level metadata. When Tenzai’s AI generates anomalous DNS queries during SSRF exploitation or initiates unusual east-west traffic patterns during lateral movement, behavioral analytics catches what signatures miss.
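A behavioral baseline can be far simpler than a commercial platform and still illustrate the idea. The sketch below assumes NetFlow/IPFIX records have already been aggregated into per-host east-west flow counts per interval; that aggregation step is not shown. It applies the same 2-standard-deviation rule used in the checklist later in this article. Platforms such as Secure Network Analytics use far richer models than a single count per host.

```python
from statistics import mean, stdev


def flag_anomalies(baseline, current, threshold=2.0):
    """Flag hosts whose latest east-west flow count deviates from their own
    history by more than `threshold` sample standard deviations.

    baseline: {host: [flow counts per past interval]}
    current:  {host: flow count in the latest interval}
    """
    flagged = []
    for host, count in current.items():
        history = baseline.get(host, [])
        if len(history) < 2:
            continue  # stdev needs at least two samples; skip unbaselined hosts
        mu, sigma = mean(history), stdev(history)
        if abs(count - mu) > threshold * sigma:
            flagged.append(host)
    return flagged


if __name__ == "__main__":
    baseline = {
        "10.0.100.5": [40, 42, 38, 41, 39],
        "10.0.100.9": [10, 12, 11, 9, 13],
    }
    current = {"10.0.100.5": 41, "10.0.100.9": 55}
    print(flag_anomalies(baseline, current))  # ['10.0.100.9']
```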

Layer 3: Continuous Automated Red Teaming

The defensive equivalent of AI offensive tools is continuous automated penetration testing. Rather than annual pentests that produce stale results, organizations deploy AI-driven red team agents that continuously probe their own infrastructure. According to Penligent’s 2026 Guide to AI Penetration Testing, the industry is shifting from “scan and patch” to “agentic red teaming” — AI agents that reason about attack paths, chain vulnerabilities, and test defenses 24/7.
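Agentic red teaming ultimately reasons about which hosts can reach which. A defender can run a much cruder version of the same reasoning continuously. The approach is to build a directed reachability graph from firewall and SGACL policy, then enumerate every path from an exposed entry point to a sensitive asset. The sketch below assumes such a graph already exists, and its node names are hypothetical. Path enumeration grows exponentially on large, densely connected graphs, so real tools prune heavily.

```python
from collections import deque


def attack_paths(reachability, start, target):
    """Enumerate simple paths from `start` to `target`, breadth-first,
    in a directed graph given as {node: [nodes it can reach]}."""
    paths = []
    queue = deque([[start]])
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == target:
            paths.append(path)
            continue
        for nxt in reachability.get(node, []):
            if nxt not in path:  # skip cycles so every path stays simple
                queue.append(path + [nxt])
    return paths


if __name__ == "__main__":
    graph = {
        "internet": ["web"],
        "web": ["app", "redis"],
        "app": ["db"],
        "redis": ["db"],
    }
    for p in attack_paths(graph, "internet", "db"):
        print(" -> ".join(p))
    # internet -> web -> app -> db
    # internet -> web -> redis -> db
```

The second path is the kind of result worth acting on: if policy should never let the web tier reach Redis, that edge is the gap to close.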

The practical takeaway: if you’re not testing your ISE deployment and firewall policies with automated tools at least monthly, an AI attacker will find the gaps you missed.
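Rule shadowing is one check that is easy to automate monthly. A shadowed rule can never match because an earlier, broader rule already catches all of its traffic. A shadowed deny is exactly the kind of gap an automated attacker finds. The sketch below uses a deliberately simplified rule model of source network, destination network, and a single destination port (or any port). That model is an assumption: real ASA or FTD policies also match on protocol, objects, and zones.

```python
from dataclasses import dataclass
from ipaddress import IPv4Network, ip_network
from typing import List, Optional


@dataclass(frozen=True)
class Rule:
    name: str
    src: IPv4Network
    dst: IPv4Network
    port: Optional[int]  # None means "any port"
    action: str          # "permit" or "deny"; shadowing itself ignores it


def covers(earlier: Rule, later: Rule) -> bool:
    """True if every packet `later` would match is already matched by `earlier`."""
    return (
        later.src.subnet_of(earlier.src)
        and later.dst.subnet_of(earlier.dst)
        and (earlier.port is None or earlier.port == later.port)
    )


def shadowed(rules: List[Rule]) -> List[str]:
    """Names of rules that can never match because an earlier rule covers them."""
    return [
        r.name for i, r in enumerate(rules)
        if any(covers(prev, r) for prev in rules[:i])
    ]


if __name__ == "__main__":
    policy = [
        Rule("permit-web-any", ip_network("10.0.100.0/24"), ip_network("0.0.0.0/0"), None, "permit"),
        Rule("deny-web-to-db", ip_network("10.0.100.0/24"), ip_network("10.0.200.0/24"), 5432, "deny"),
        Rule("permit-app-to-db", ip_network("10.0.150.0/24"), ip_network("10.0.200.0/24"), 5432, "permit"),
    ]
    print(shadowed(policy))  # ['deny-web-to-db']
```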

How Does This Change the CCIE Security Preparation Path?

The CCIE Security v6.1 blueprint doesn’t explicitly list “AI offensive techniques” as an exam topic, but the defensive foundations it tests (ISE policy design, Firepower/FTD threat defense, TrustSec segmentation, VPN architectures, and behavioral monitoring) are exactly the technologies that defend against autonomous AI attackers. According to Cisco’s official exam topics (2026), the blueprint’s Secure Connectivity and Segmentation section, together with its Security Policies and Procedures section, directly addresses the zero trust and microsegmentation architectures discussed above.

For CCIE Security candidates, the practical implication is that lab preparation must include scenarios where automated tools probe your configurations:

- ISE profiling and posture assessment: Ensure endpoints are authenticated and assessed before network access, limiting the initial foothold an AI attacker needs

- TrustSec SGT policy matrices: Build and test segmentation policies that prevent lateral movement between security zones

- FTD/FMC correlation rules: Configure Firepower Management Center correlation policies that detect multi-stage attack patterns, not just individual signatures

- Encrypted traffic analytics (ETA): Practice configuring ETA on Catalyst 9000 to detect malicious traffic within TLS tunnels without decryption

The engineers who understand why these configurations matter — not just how to type them — will be the ones building networks that survive autonomous AI probing. The CCNP-to-CCIE Security study path should now explicitly include AI threat scenario planning.

What the Industry Experts Are Saying About AI Offensive Capabilities

According to Gadi Evron, cofounder and CEO of AI security company Knostic (2026), hackers have already had their “singularity moment.” The proliferation of AI offensive capabilities is no longer limited to nation-states or well-funded threat actors. Evron told Forbes: “Tenzai now showing how their agents win at 99% of six CTFs shows a maturity of the capability in the market, even though the proliferation of such capabilities to pretty much everybody is already there, and growing.”

HPE Juniper Networking’s Jim Kelly argues that the defensive counterpart — agentic AI for self-driving networks — is equally critical. According to GovConWire (2026), Kelly envisions networks that “detect and address issues before disruptions,” using AI agents that continuously monitor, reconfigure, and heal network infrastructure. For CCIE Enterprise Infrastructure engineers working alongside security teams, this means SD-WAN and DNA Center policies will increasingly integrate with security analytics platforms.

The startup ecosystem confirms the trend. Tenzai raised $75 million in seed funding within six months of founding, achieving a $330 million valuation. Native, another Israeli startup, emerged from stealth with $42 million to build multi-cloud security policy translation across AWS, Azure, GCP, and Oracle Cloud. According to Ynetnews (2026), Native’s platform converts security intent into provider-native controls — directly addressing the multi-cloud defense complexity that AI attackers exploit.

| Company | Funding | Focus | Relevance to Network Security |
|---|---|---|---|
| Tenzai | $75M seed, $330M valuation | Autonomous offensive AI | Demonstrates AI attack capability |
| Native | $42M | Multi-cloud security policy | Automated defense across cloud providers |
| XBOW | Undisclosed | AI bug bounty hunting | #1 on HackerOne leaderboard (2025) |
| Knostic | Undisclosed | AI security posture | Threat intelligence and AI risk assessment |

Practical Defensive Checklist for Network Security Engineers

Network security engineers should implement these measures immediately, regardless of CCIE certification status. Each item directly counters a capability that AI offensive tools like Tenzai have demonstrated:

Deploy microsegmentation at Layer 2/3: Configure TrustSec SGTs on all access-layer switches. Enforce SGACL policies between security zones. Test with `show cts role-based permissions` to verify enforcement.

Enable behavioral analytics: Export Flexible NetFlow from all L3 infrastructure to Cisco Secure Network Analytics or equivalent. Baseline normal east-west traffic. Alert on deviations exceeding 2 standard deviations.

Implement encrypted traffic analytics: Enable ETA on Catalyst 9000 switches (`et-analytics` configuration mode) to detect malicious patterns within encrypted flows without decryption.

Automate red team testing: Deploy continuous penetration testing tools against your own infrastructure. Run automated scans against ISE policy configurations and firewall rule sets monthly at minimum.

Reduce MTTD with AI-driven SOC tools: Integrate Firepower/FMC event data with SIEM platforms. Configure correlation rules that detect multi-step attack chains, not individual events.

Segment management planes: Isolate network management interfaces (SSH, SNMP, RESTCONF) into dedicated VRFs with ACLs that restrict access to jump hosts only.
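You can also verify the jump-host restriction from outside the device, for example by replaying NetFlow records or access logs against the intended rule. The helper below is a hypothetical audit sketch, not device configuration. The jump-host subnet and port list are assumptions. It models destination ports only; in practice SNMP runs over UDP 161, and RESTCONF usually runs over HTTPS on 443.

```python
from ipaddress import ip_address, ip_network

# Hypothetical jump-host subnet inside the management VRF.
JUMP_HOSTS = [ip_network("10.255.0.0/29")]
# Management services to protect, keyed by destination port (assumed values).
MGMT_PORTS = {22: "ssh", 161: "snmp", 443: "restconf"}


def mgmt_access_allowed(src_ip: str, dst_port: int) -> bool:
    """Intended policy: management ports are reachable only from jump hosts;
    everything else aimed at the management plane is denied."""
    if dst_port not in MGMT_PORTS:
        return False
    src = ip_address(src_ip)
    return any(src in net for net in JUMP_HOSTS)


if __name__ == "__main__":
    print(mgmt_access_allowed("10.255.0.3", 22))  # True: jump host over SSH
    print(mgmt_access_allowed("10.0.100.5", 22))  # False: web server over SSH
```

Any flow record where this returns `False` but the device accepted the session points to an ACL or VRF leak worth investigating.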

Frequently Asked Questions

Can AI really hack better than humans in 2026?

Yes, but with caveats. Tenzai’s AI ranked in the top 1% across six CTF platforms, outperforming 125,000 human competitors. According to CEO Pavel Gurvich (2026), “there is still a small group of exceptional hackers who outperform current AI systems.” The gap is closing rapidly — last year XBOW reached #1 on HackerOne, and Tenzai’s achievement represents the first time AI matched elite human performance across multiple platforms simultaneously.

How much does it cost to run an AI hacking agent?

Tenzai’s AI completed entire CTF competitions for an average of $12.92 each, with total costs across all six platforms under $5,000, according to Forbes (2026). This makes advanced offensive capabilities affordable far beyond nation-state actors. Gurvich warns this capability is “rapidly getting out of the realm of nations and military intelligence organizations.”

What defensive strategies work against AI-powered attacks?

Three layers are essential: zero trust microsegmentation using Cisco ISE and TrustSec to limit lateral movement, AI-driven behavioral analytics in the SOC for real-time anomaly detection, and continuous automated red teaming to find vulnerabilities before AI attackers do. According to SecurityWeek (2026), zero trust must become “operational architecture” rather than a conceptual framework.

Does CCIE Security cover AI-driven threats?

The CCIE Security v6.1 blueprint doesn’t explicitly test AI offensive techniques, but it covers the defensive foundations — ISE, TrustSec, zero trust, Firepower/FTD, and behavioral monitoring — that form the primary defense against AI-powered attacks. Candidates who understand threat modeling will have a significant advantage.

How fast can AI exploit a vulnerability compared to humans?

According to Knostic CEO Gadi Evron (2026), the time from vulnerability discovery to working exploit has collapsed from days or weeks to hours with AI assistance. Tenzai’s agent completed entire multi-step exploit chains, including reconnaissance, vulnerability discovery, and exploitation, in roughly two hours on average.

Ready to build defenses that can withstand AI-powered attacks? Contact us on Telegram @firstpasslab for a free assessment of your security architecture readiness.