Nvidia’s networking division generated $31 billion in fiscal year 2026 revenue, $11 billion of it in Q4 alone, and a GPU company is now the largest data center Ethernet switch vendor on the planet. According to Nvidia’s Q4 FY2026 earnings report, networking revenue surged 267% year-over-year, and the division now generates more revenue in a single quarter than Cisco’s data center switching business does in a full year. This isn’t a side project. Networking is now Nvidia’s second-largest business segment, and it’s reshaping who builds, sells, and operates data center networks.

Key Takeaway: The $7 billion Mellanox acquisition in 2020 has become the most consequential networking deal of the decade — a GPU company now dominates data center switching, and network engineers who understand GPU fabric design will command the highest-paying infrastructure roles in 2026 and beyond.

How Did Nvidia Build a $31 Billion Networking Business?

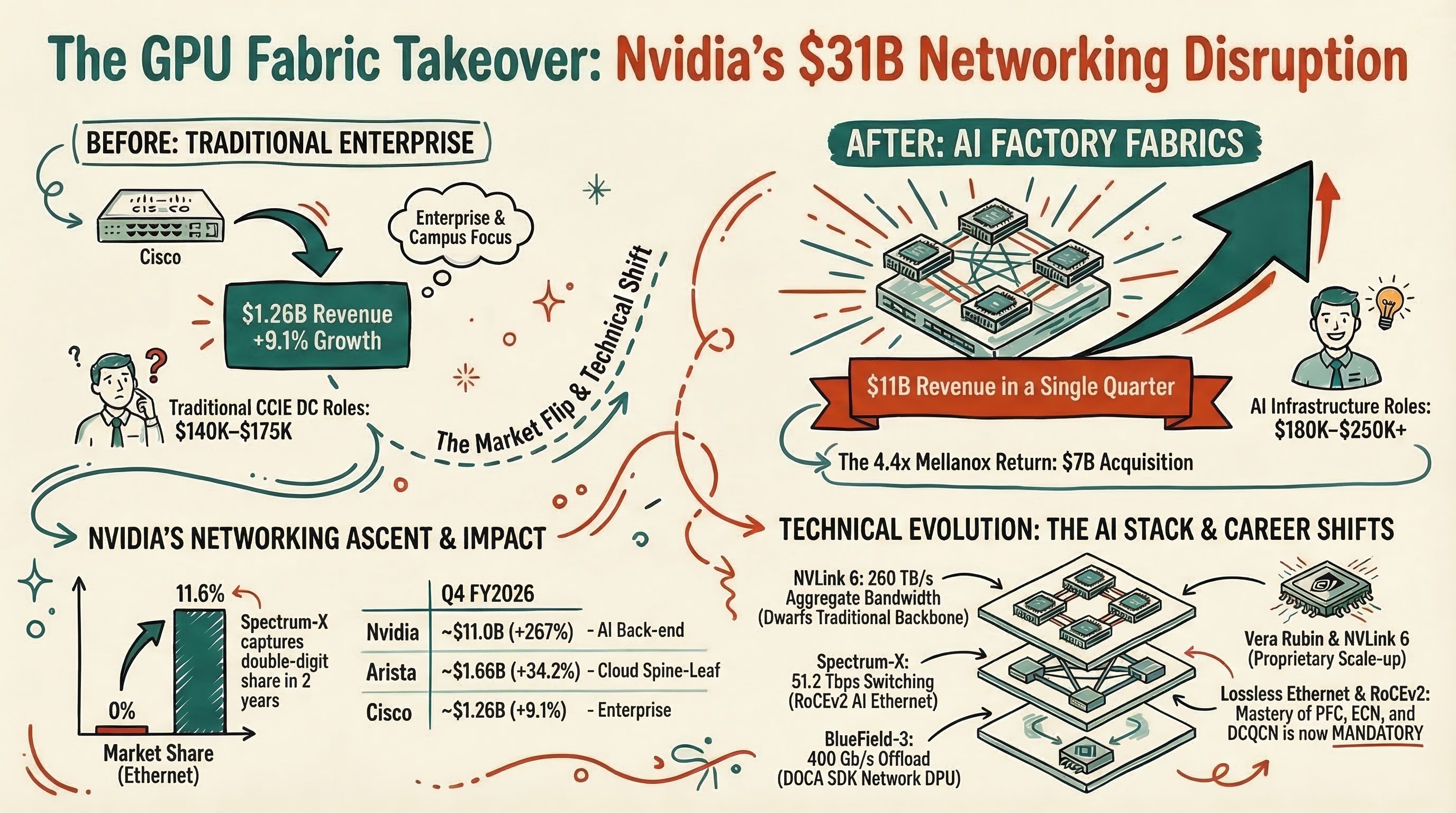

Nvidia’s networking division traces directly to the $7 billion Mellanox acquisition completed in April 2020. Six years later, annual networking revenue runs at roughly 4.4x the purchase price. Mellanox brought InfiniBand switching, ConnectX network adapters, and deep expertise in RDMA (Remote Direct Memory Access) networking, which Nvidia integrated into a full-stack AI infrastructure platform.

According to Kevin Cook, senior equity strategist at Zacks Investment Research, “Nvidia’s networking business reports $11 billion for the quarter; that number is greater than Cisco’s networking business, almost as big as the full-year estimates.” The division does in one quarter what Cisco’s data center networking does in a year.

The growth trajectory tells the story. Networking revenue climbed from $3.17 billion in Q1 FY2025 to $7.3 billion in Q2 FY2026, then $8.19 billion in Q3 FY2026 (162% YoY growth per Zacks), before hitting $11 billion in Q4. According to Futurum Group analysis, Spectrum-X alone surpassed a $10 billion annualized run rate by mid-FY2026.

| Quarter | Networking Revenue | YoY Growth | Key Driver |
|---|---|---|---|
| Q1 FY2025 | $3.17B | +240% | InfiniBand demand |
| Q2 FY2026 | $7.3B | +100% | Spectrum-X ramp |
| Q3 FY2026 | $8.19B | +162% | 800GbE adoption |
| Q4 FY2026 | $11.0B | +267% | NVLink + CPO |
| Full FY2026 | $31B+ | n/a | Full-stack AI networking |
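The YoY column and the 4.4x Mellanox multiple are simple ratios, so they can be sanity-checked directly. A minimal sketch using only the figures in the table above (the Q2 FY2026 growth rate is rounded to +100%, so its implied year-ago base is approximate):

```python
# Figures copied from the table (USD billions); growth rates as fractions.
quarters = {
    "Q1 FY2025": (3.17, 2.40),
    "Q2 FY2026": (7.30, 1.00),
    "Q3 FY2026": (8.19, 1.62),
    "Q4 FY2026": (11.0, 2.67),
}

def implied_prior_year(revenue: float, yoy_growth: float) -> float:
    """Back out the year-ago quarter from revenue = prior * (1 + growth)."""
    return revenue / (1.0 + yoy_growth)

MELLANOX_PRICE = 7.0      # USD billions, 2020 purchase price
FY2026_NETWORKING = 31.0  # USD billions, full-year figure from the text

for name, (rev, growth) in quarters.items():
    prior = implied_prior_year(rev, growth)
    print(f"{name}: ${rev:.2f}B now vs ~${prior:.2f}B a year earlier")

multiple = FY2026_NETWORKING / MELLANOX_PRICE
print(f"FY2026 networking revenue / Mellanox price = {multiple:.1f}x")
```

The Q4 row implies a year-ago quarter of about $3.0 billion, which is what makes the +267% figure line up with the $11 billion total.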

Kevin Deierling, Nvidia SVP of Networking (who joined through the Mellanox acquisition), told TechCrunch: “Jensen said this the first day when he acquired us — the data center is the new unit of computing. Networking is a lot more than just moving smaller amounts of data between a compute node; it’s actually a foundation.”

What Technologies Power Nvidia’s Networking Stack?

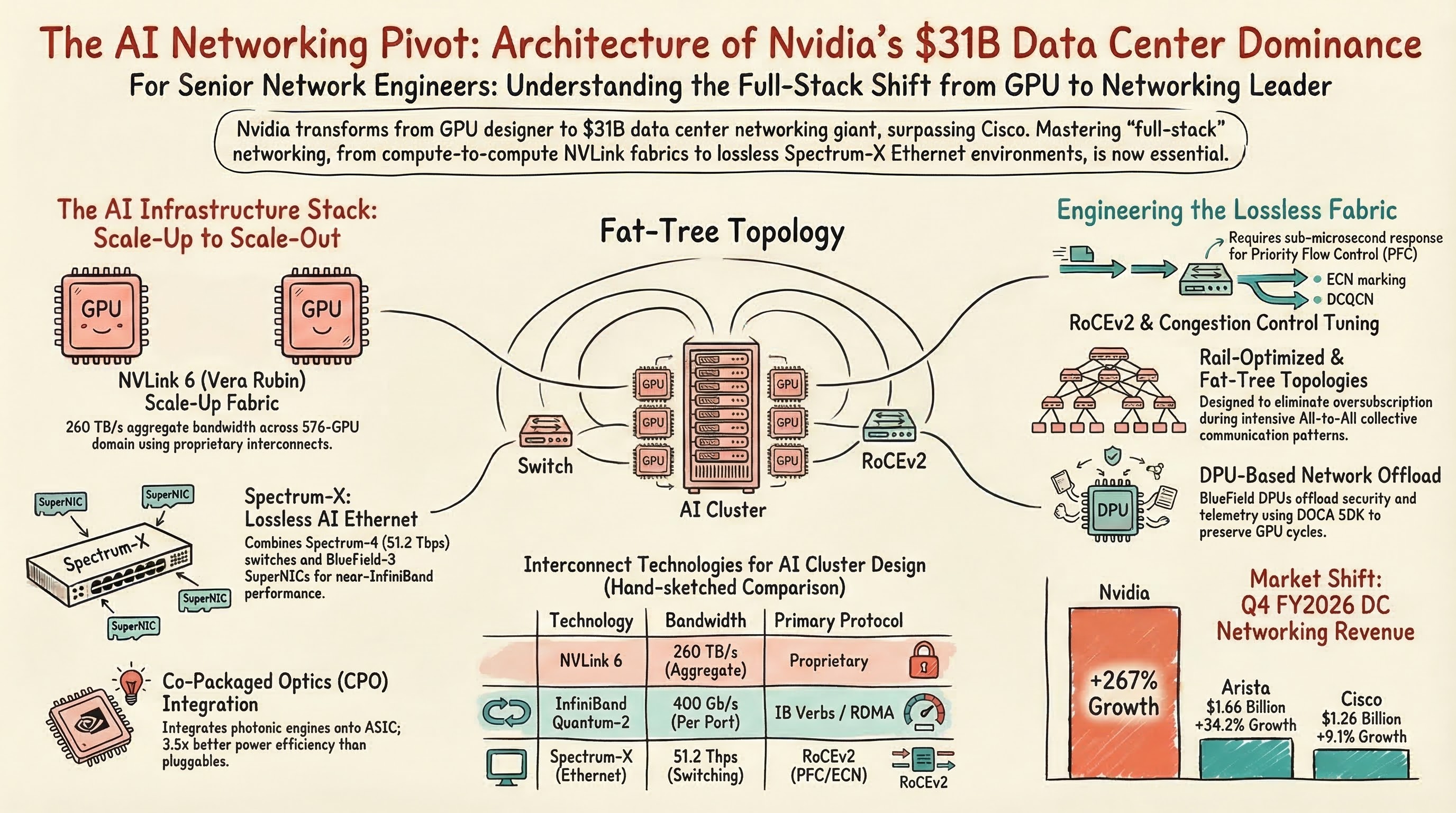

Nvidia’s networking portfolio spans four distinct technology layers — NVLink for GPU-to-GPU scale-up, InfiniBand for HPC scale-out, Spectrum-X for Ethernet-based AI training, and co-packaged optics for next-generation power efficiency. Each layer addresses a different bandwidth and latency requirement in the modern AI factory, and together they form what Nvidia calls a “full-stack” networking solution that no other vendor can match end-to-end.

NVLink: The GPU-to-GPU Backbone. NVLink 5 on Nvidia’s Blackwell architecture delivers 900 GB/s of unidirectional bandwidth per GPU — 9x more than the 100 GB/s available on the scale-out network via ConnectX-8 NICs, according to SemiAnalysis. The upcoming Vera Rubin platform announced at GTC 2026 pushes this to NVLink 6 with 260 TB/s aggregate bandwidth across a 576-GPU domain — more than the backbone capacity of some entire service provider networks.
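The 9x figure is a units conversion: NIC line rates are quoted in gigabits per second, NVLink in gigabytes per second. A short sketch, assuming one 800 Gb/s ConnectX-8 port per GPU on the scale-out side (an assumption for illustration; actual NIC-to-GPU ratios vary by system design):

```python
def gbps_to_gbytes(gigabits_per_s: float) -> float:
    """Convert gigabits per second to gigabytes per second (8 bits per byte)."""
    return gigabits_per_s / 8.0

NVLINK5_GBYTES_PER_GPU = 900.0  # GB/s per direction, Blackwell (from the text)
CONNECTX8_GBITS = 800.0         # Gb/s; assumes one ConnectX-8 port per GPU

scale_out = gbps_to_gbytes(CONNECTX8_GBITS)
ratio = NVLINK5_GBYTES_PER_GPU / scale_out
print(f"scale-out per GPU: {scale_out:.0f} GB/s, NVLink advantage: {ratio:.0f}x")
```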

InfiniBand Quantum: The HPC Standard. Nvidia’s Quantum InfiniBand platform dominates high-performance computing interconnects. Quantum-2 (NDR) switches deliver 400 Gb/s per port with sub-microsecond latency and in-network computing (SHARP) for collective operations. Government labs, financial HPC clusters, and early AI training deployments run InfiniBand because it provides deterministic latency that Ethernet historically couldn’t match.
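To see why in-network computing matters for collectives, compare per-rank traffic for a classic ring AllReduce with an idealized in-network reduction of the kind SHARP performs. This is a simplified model (it ignores headers, pipelining, and latency terms), and the 1 GB message size is hypothetical:

```python
def ring_allreduce_bytes_sent(message_bytes: float, n_ranks: int) -> float:
    """Bytes each rank transmits in a ring AllReduce
    (reduce-scatter + all-gather): 2 * (N - 1) / N * S."""
    return 2.0 * (n_ranks - 1) / n_ranks * message_bytes

def in_network_allreduce_bytes_sent(message_bytes: float) -> float:
    """Idealized in-network reduction: each rank sends its buffer once
    up the switch tree and receives the reduced result back."""
    return message_bytes

S = 1e9  # hypothetical 1 GB gradient buffer
for n in (8, 64, 1024):
    ring = ring_allreduce_bytes_sent(S, n)
    in_net = in_network_allreduce_bytes_sent(S)
    print(f"N={n:5d}: ring {ring / 1e9:.3f} GB/rank, "
          f"in-network {in_net / 1e9:.3f} GB/rank ({ring / in_net:.2f}x)")
```

The ring cost approaches twice the message size as the cluster grows, while the idealized in-network case stays at one message size, which is roughly why switch-side reduction pays off most at large scale.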

Spectrum-X: Ethernet for AI at Scale. Spectrum-X combines Spectrum-4 switches (51.2 Tbps) with BlueField-3 SuperNICs to deliver lossless Ethernet performance approaching InfiniBand levels. Adaptive routing, enhanced congestion control (PFC + ECN + DCQCN), and RoCEv2 optimization made Spectrum-X the technology that convinced Meta to choose Ethernet over InfiniBand for its $135 billion AI infrastructure buildout. According to IDC data cited by Motley Fool, Nvidia now holds 11.6% of the data center Ethernet switch market — from essentially zero three years ago.
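DCQCN is the sender-side half of the PFC + ECN + DCQCN combination: switches mark packets with ECN, the receiver returns Congestion Notification Packets (CNPs), and the sender cuts and then recovers its rate. The sketch below is a simplified model of the reaction-point logic from the original DCQCN paper (Zhu et al., SIGCOMM 2015), not Nvidia's Spectrum-X implementation; the gain `g`, the step `r_ai`, and the single merged timer are illustrative assumptions:

```python
from dataclasses import dataclass

@dataclass
class DcqcnSender:
    """Simplified DCQCN reaction point. Separate alpha/rate timers, byte
    counters, and hyper-increase are collapsed into one quiet-period step."""
    line_rate: float        # Gb/s
    g: float = 1 / 256      # alpha gain (illustrative)
    r_ai: float = 5.0       # additive-increase step in Gb/s (illustrative)
    fast_recovery_steps: int = 5
    alpha: float = 1.0      # congestion estimate
    rc: float = 0.0         # current rate, set to line rate in __post_init__
    rt: float = 0.0         # target rate to recover toward
    steps_since_cnp: int = 0

    def __post_init__(self) -> None:
        self.rc = self.rt = self.line_rate

    def on_cnp(self) -> None:
        """CNP received: remember the current rate, cut it, raise alpha."""
        self.rt = self.rc
        self.rc *= 1 - self.alpha / 2
        self.alpha = (1 - self.g) * self.alpha + self.g
        self.steps_since_cnp = 0

    def on_quiet_period(self) -> None:
        """No CNP during the period: decay alpha and climb back toward rt."""
        self.alpha *= 1 - self.g
        if self.steps_since_cnp >= self.fast_recovery_steps:
            # Fast recovery is over; raise the target additively.
            self.rt = min(self.rt + self.r_ai, self.line_rate)
        self.rc = min((self.rt + self.rc) / 2, self.line_rate)
        self.steps_since_cnp += 1

sender = DcqcnSender(line_rate=400.0)
sender.on_cnp()
print(f"after one CNP: {sender.rc:.1f} Gb/s")  # alpha starts at 1, so 200.0
for _ in range(10):
    sender.on_quiet_period()
print(f"after 10 quiet periods: {sender.rc:.1f} Gb/s")
```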

Co-Packaged Optics (CPO): The Power Efficiency Play. At GTC 2026, Nvidia unveiled Spectrum-X Ethernet Photonics with co-packaged optics built on 200G SerDes technology. According to Nvidia’s developer blog, CPO delivers 3.5x better power efficiency and 10x improved resiliency versus pluggable transceivers. When a single AI rack draws up to 600 kW and optical networking consumes 10% of that power envelope per Futurum Group analysis, CPO isn’t optional — it’s a prerequisite for scaling to million-GPU AI factories.

| Technology | Bandwidth | Use Case | Protocol |
|---|---|---|---|
| NVLink 6 (Vera Rubin) | 260 TB/s aggregate | GPU-to-GPU scale-up | Proprietary |
| InfiniBand Quantum-2 | 400 Gb/s per port | HPC, early AI training | IB verbs, RDMA |
| Spectrum-X (Spectrum-4) | 51.2 Tbps switching | AI Ethernet fabric | RoCEv2, PFC/ECN |
| Co-Packaged Optics | 102.4 Tb/s per switch | Next-gen scale-out | Photonic SerDes |
| BlueField-3 SuperNIC | 400 Gb/s | Network offload, DPU | DOCA SDK |

How Does Nvidia’s Rise Change the Competitive Landscape Against Cisco and Arista?

Nvidia has fundamentally disrupted the data center switching vendor hierarchy that Cisco and Arista dominated for two decades. According to NextPlatform analysis, Nvidia has “pulled far ahead of both Cisco and Arista” in data center Ethernet switch revenue. Cisco reported $1.26 billion (up 9.1%) and Arista $1.66 billion (up 34.2%) in the same quarter that Nvidia posted $11 billion in total networking revenue, a figure that also includes NVLink, InfiniBand, and network adapters rather than Ethernet switches alone.

The market dynamics split cleanly into two segments. In traditional enterprise and campus networking, Cisco remains dominant: its Catalyst 9000 series, Meraki cloud management, and Catalyst Center (formerly DNA Center) automation platform serve the enterprise switching market that Nvidia has no interest in entering. Arista dominates cloud provider spine-leaf deployments with its EOS platform at hyperscalers like Microsoft and Meta (for non-AI traffic).

But in AI back-end networking — the GPU-to-GPU fabric that connects thousands of accelerators for model training — Nvidia owns the market. According to Dell’Oro Group’s 2026 predictions, “vendors with greater exposure to AI back-end networking significantly outperformed,” and 800 Gbps switch ports surpassed 20 million within three years of shipments.

The newly merged HPE-Juniper entity adds another competitor. HPE reported $2.7 billion in networking revenue in a single quarter after closing the $14 billion Juniper acquisition, but its strength lies in campus, enterprise, and some cloud networking, not AI-specific GPU fabrics.

Nvidia’s differentiation is the full-stack approach. As Deierling told TechCrunch: “I can’t think of other companies that have full-stack capabilities that we have. We build the full compute stack, fully integrated stack, and then we go to market through all of our partners.” Cisco sells switches. Arista sells switches with better software. Nvidia sells a GPU-to-network integrated system where the switching fabric is optimized specifically for the compute it connects.

| Vendor | Latest Quarterly Revenue | YoY Growth | Primary Strength |
|---|---|---|---|
| Nvidia | ~$11.0B (total networking) | +267% | AI back-end fabric (NVLink + Spectrum-X) |
| Arista | ~$1.66B | +34.2% | Cloud spine-leaf, EOS automation |
| Cisco | ~$1.26B (DC segment) | +9.1% | Enterprise, campus, SD-WAN |
| HPE-Juniper | ~$2.7B (total networking) | +152% | Enterprise, campus, cloud |

What Does Nvidia’s $4 Billion Optics Investment Signal for the Future?

Nvidia invested $4 billion in optical networking companies Lumentum and Coherent in early March 2026, signaling that photonics is the next critical bottleneck in AI infrastructure scaling. According to Forbes, these investments accelerate Nvidia’s transformation “from a chip company into an AI infrastructure conglomerate” that controls every layer of the compute stack — GPUs, networking switches, DPUs, system software, and now optical interconnects.

The power math drives the urgency. AI data center racks can draw up to 600 kW each, and pluggable optical transceivers consume approximately 10 watts per 800G port, adding up to 10% of rack power at scale. Nvidia’s co-packaged optics technology integrates photonic engines directly onto the switch ASIC package, eliminating the pluggable transceiver entirely. The result, per Nvidia’s specifications: 3.5x better power efficiency and 10x better resiliency.
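A rough way to size the optics power at stake, assuming the 10 W per 800G port figure above and reading "3.5x better power efficiency" as 3.5x less optics power for the same bandwidth (an interpretation, not an Nvidia formula). The 100,000-port cluster is hypothetical:

```python
# 10 W per 800G pluggable port and the 3.5x efficiency claim come from the text;
# treating 3.5x as "divide per-port optics power by 3.5" is an assumption.
PLUGGABLE_W_PER_PORT = 10.0
CPO_EFFICIENCY_GAIN = 3.5

def optics_power_kw(ports: int, watts_per_port: float) -> float:
    """Total optics power in kilowatts for a given port count."""
    return ports * watts_per_port / 1000.0

ports = 100_000  # hypothetical number of 800G optical ports in a large AI cluster
pluggable_kw = optics_power_kw(ports, PLUGGABLE_W_PER_PORT)
cpo_kw = optics_power_kw(ports, PLUGGABLE_W_PER_PORT / CPO_EFFICIENCY_GAIN)
print(f"pluggable: {pluggable_kw:,.0f} kW  CPO: {cpo_kw:,.0f} kW  "
      f"saved: {pluggable_kw - cpo_kw:,.0f} kW")
```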

For context, the Spectrum-6 SPX Network Rack announced at GTC 2026 delivers 102.4 Tb/s of switching capacity with co-packaged optics. That is the equivalent of 128 ports at 800 Gbps on a single switch ASIC, with the optics integrated on-package rather than in pluggable modules. The STMicro PIC100 silicon photonics platform we previously covered targets similar 800G/1.6T integration for competing vendors, but Nvidia’s vertical integration gives it a deployment timeline advantage.
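The 128-port figure follows from dividing switch capacity by port speed. Assuming 200G SerDes lanes (the CPO generation described earlier is built on 200G SerDes) and 800G ports built from four such lanes, both assumptions for illustration, the lane math works out as follows:

```python
SWITCH_CAPACITY_GBPS = 102_400  # 102.4 Tb/s switch ASIC (from the text)
SERDES_LANE_GBPS = 200          # assumed 200G SerDes per electrical lane

def port_count(capacity_gbps: int, port_speed_gbps: int) -> int:
    """Number of ports of a given speed that exactly fill the capacity."""
    if capacity_gbps % port_speed_gbps:
        raise ValueError("capacity is not an integer multiple of port speed")
    return capacity_gbps // port_speed_gbps

lanes = port_count(SWITCH_CAPACITY_GBPS, SERDES_LANE_GBPS)
for speed in (800, 1600):
    print(f"{speed}G: {port_count(SWITCH_CAPACITY_GBPS, speed)} ports, "
          f"{speed // SERDES_LANE_GBPS} lanes per port")
print(f"total 200G SerDes lanes: {lanes}")
```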

The Vera Rubin generation (shipping late 2026 into 2027) pairs the LP40 LPU with BlueField-5 and CX10 NICs connected through Nvidia Kyber, supporting both copper and co-packaged optics for scale-up, with Spectrum-class optical scale-out. This represents a complete optical networking platform from a company that had no standalone networking business before 2020.

What Skills Do Network Engineers Need for Nvidia’s AI Networking Era?

Network engineers who understand GPU fabric design, lossless Ethernet tuning, and RDMA networking will command the highest-paying data center infrastructure roles in 2026. AI data center network architect positions pay $180,000-$250,000+ according to LinkedIn job postings for companies building large-scale GPU clusters — a significant premium over traditional CCIE Data Center roles averaging $140,000-$175,000.

The technical skill gap is specific and addressable:

Lossless Ethernet and RoCEv2 Configuration. Priority Flow Control (PFC), Explicit Congestion Notification (ECN), and DCQCN congestion control are the foundations of RDMA over Converged Ethernet v2 (RoCEv2). Traditional data center engineers configure QoS for VoIP and storage; AI fabrics require sub-microsecond PFC response times across fabrics built from thousands of switches. CCIE Data Center candidates should practice PFC watchdog timers, ECN marking thresholds, and buffer allocation on Nexus 9000 platforms.
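ECN marking thresholds are commonly expressed as a RED-style curve: no marking below a minimum queue depth (K_min), a linear ramp up to a maximum probability (P_max) at K_max, and marking of every packet above K_max. A minimal sketch of that curve; the threshold values are hypothetical and are not a Cisco or Nvidia recommendation:

```python
def ecn_mark_probability(queue_kb: float, k_min_kb: float,
                         k_max_kb: float, p_max: float) -> float:
    """RED-style ECN marking curve:
    - at or below K_min: never mark
    - between K_min and K_max: probability ramps linearly from 0 to P_max
    - at or above K_max: mark every packet
    """
    if queue_kb <= k_min_kb:
        return 0.0
    if queue_kb >= k_max_kb:
        return 1.0
    return p_max * (queue_kb - k_min_kb) / (k_max_kb - k_min_kb)

# Hypothetical thresholds for illustration only (not a vendor default).
PROFILE = {"k_min_kb": 150.0, "k_max_kb": 1500.0, "p_max": 0.1}

for depth_kb in (100, 500, 1000, 1600):
    p = ecn_mark_probability(depth_kb, **PROFILE)
    print(f"queue {depth_kb:>5} KB -> mark probability {p:.3f}")
```

The general intent is for ECN marking to slow senders before queues reach PFC pause thresholds, so ECN handles ongoing congestion and PFC acts as the last-resort backstop.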

GPU Fabric Topology Design. AI clusters use fat-tree or rail-optimized topologies with specific oversubscription ratios designed for collective communication patterns such as AllReduce, AllGather, and all-to-all. Unlike traditional north-south traffic, GPU training generates synchronized east-west bursts that can saturate many links at once. Understanding how VXLAN EVPN integrates with, or gives way to, Spectrum-X adaptive routing in AI pods is increasingly relevant.
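A small sketch of the arithmetic behind these topology choices: leaf oversubscription as a bandwidth ratio, the endpoint ceiling of a non-blocking two-tier fabric built from identical switches, and a simplified rail-optimized hop model. The port counts and the 1-hop/3-hop model are illustrative assumptions; in practice, cross-rail traffic is often relayed over NVLink inside the server first:

```python
from fractions import Fraction

def oversubscription(downlinks: int, down_gbps: int,
                     uplinks: int, up_gbps: int) -> Fraction:
    """Leaf oversubscription = host-facing bandwidth / spine-facing bandwidth.
    A ratio of 1 (1:1) is non-blocking."""
    return Fraction(downlinks * down_gbps, uplinks * up_gbps)

def two_tier_max_hosts(radix: int) -> int:
    """Non-blocking two-tier leaf-spine from identical radix-k switches:
    each leaf uses k/2 ports down and k/2 up, and each spine port feeds one
    leaf, so up to k leaves x k/2 hosts = k*k/2 endpoints."""
    return radix * radix // 2

def hops_between(src: tuple[int, int], dst: tuple[int, int]) -> int:
    """Switch hops between (server, gpu) endpoints in a simplified
    rail-optimized fabric where GPU g of every server attaches to rail leaf g:
    same rail -> 1 hop (the shared leaf); different rails -> leaf-spine-leaf."""
    (_, src_gpu), (_, dst_gpu) = src, dst
    return 1 if src_gpu == dst_gpu else 3

print(oversubscription(32, 400, 16, 800))  # 1   -> non-blocking
print(oversubscription(48, 100, 8, 400))   # 3/2 -> 1.5:1
print(two_tier_max_hosts(64))              # 2048 endpoints
print(hops_between((0, 3), (17, 3)), hops_between((0, 3), (5, 4)))  # 1 3
```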

InfiniBand Fundamentals. Subnet managers, LID-based forwarding, adaptive routing, and SHARP in-network computing remain relevant for HPC and high-end AI training clusters. While Ethernet is winning new deployments, thousands of existing InfiniBand clusters need management and migration planning.
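LID-based forwarding means each InfiniBand switch holds a linear forwarding table (LFT) mapping destination LID to output port, which the subnet manager computes and programs. A minimal min-hop sketch over a hypothetical two-leaf, one-spine fabric; real subnet managers such as OpenSM also balance routes across equal-cost ports, which this sketch skips by always picking the lowest-numbered port:

```python
from collections import deque

# Hypothetical fabric: switch -> {port number: neighbor node}.
LINKS = {
    "leaf1": {1: "hostA", 2: "hostB", 3: "spine"},
    "leaf2": {1: "hostC", 2: "hostD", 3: "spine"},
    "spine": {1: "leaf1", 2: "leaf2"},
}
HOST_LID = {"hostA": 1, "hostB": 2, "hostC": 3, "hostD": 4}  # hypothetical LIDs

def build_adjacency(links):
    """Undirected adjacency sets covering switches and hosts."""
    adj = {}
    for switch, ports in links.items():
        for neighbor in ports.values():
            adj.setdefault(switch, set()).add(neighbor)
            adj.setdefault(neighbor, set()).add(switch)
    return adj

def hop_distances(adj, start):
    """Breadth-first search: hop count from start to every node."""
    dist = {start: 0}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for nxt in adj[node]:
            if nxt not in dist:
                dist[nxt] = dist[node] + 1
                queue.append(nxt)
    return dist

def compute_lfts(links, host_lid):
    """Return {switch: {dest_lid: out_port}} using min-hop routing."""
    adj = build_adjacency(links)
    lfts = {switch: {} for switch in links}
    for host, lid in host_lid.items():
        dist = hop_distances(adj, host)
        for switch, ports in links.items():
            # Port whose neighbor is closest to the destination; ties -> lowest port.
            lfts[switch][lid] = min(ports, key=lambda p: (dist[ports[p]], p))
    return lfts

lfts = compute_lfts(LINKS, HOST_LID)
for switch, table in lfts.items():
    print(switch, table)
```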

Co-Packaged Optics and Power Budgets. Understanding optical power budgets, reach limitations, and thermal constraints of co-packaged optics versus pluggable transceivers is becoming essential for data center design roles. When an Nvidia Spectrum-6 switch eliminates pluggable modules entirely, the cabling and patch panel design changes fundamentally.
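The core of an optical power budget is simple dB arithmetic: transmit power minus receiver sensitivity gives the budget, fiber and connector losses consume it, and what remains is margin. A sketch with hypothetical values (not taken from any Nvidia or transceiver datasheet):

```python
from dataclasses import dataclass

@dataclass
class OpticalLink:
    tx_power_dbm: float          # launch power
    rx_sensitivity_dbm: float    # minimum receive power for the target BER
    fiber_km: float
    fiber_loss_db_per_km: float
    connectors: int
    loss_per_connector_db: float

    def total_loss_db(self) -> float:
        """Fiber attenuation plus connector losses."""
        return (self.fiber_km * self.fiber_loss_db_per_km
                + self.connectors * self.loss_per_connector_db)

    def margin_db(self) -> float:
        """Power budget minus link loss; positive means the link closes."""
        return (self.tx_power_dbm - self.rx_sensitivity_dbm) - self.total_loss_db()

# Hypothetical single-mode link values, for illustration only.
link = OpticalLink(tx_power_dbm=1.0, rx_sensitivity_dbm=-5.0, fiber_km=0.5,
                   fiber_loss_db_per_km=0.4, connectors=4,
                   loss_per_connector_db=0.5)
print(f"loss {link.total_loss_db():.2f} dB, margin {link.margin_db():.2f} dB")
```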

DPU and SmartNIC Programming. BlueField DPUs offload networking functions (firewalling, encryption, telemetry) from the host CPU to dedicated network processors. Nvidia’s DOCA SDK is the primary programming model, and network engineers who can configure BlueField for zero trust microsegmentation at the NIC level add significant value to AI infrastructure teams.
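Conceptually, NIC-level microsegmentation is a default-deny match table evaluated per flow. The sketch below models that logic in plain Python with hypothetical subnets; it is not the DOCA API. The one real protocol detail it uses is that RoCEv2 traffic runs over UDP destination port 4791:

```python
from dataclasses import dataclass
from ipaddress import ip_address, ip_network

@dataclass(frozen=True)
class Rule:
    src: str        # source CIDR
    dst: str        # destination CIDR
    protocol: str   # "tcp" or "udp"
    dst_port: int
    action: str     # "allow" or "deny"

def evaluate(rules: list[Rule], src_ip: str, dst_ip: str,
             protocol: str, dst_port: int) -> str:
    """First matching rule wins; unmatched flows are denied (default deny)."""
    for rule in rules:
        if (ip_address(src_ip) in ip_network(rule.src)
                and ip_address(dst_ip) in ip_network(rule.dst)
                and protocol == rule.protocol
                and dst_port == rule.dst_port):
            return rule.action
    return "deny"

# Hypothetical policy: training nodes (10.10.0.0/16) may exchange RoCEv2
# traffic (UDP 4791); only the scheduler subnet may reach SSH on them.
POLICY = [
    Rule("10.10.0.0/16", "10.10.0.0/16", "udp", 4791, "allow"),
    Rule("10.99.0.0/24", "10.10.0.0/16", "tcp", 22, "allow"),
]

print(evaluate(POLICY, "10.10.1.5", "10.10.2.7", "udp", 4791))  # allow
print(evaluate(POLICY, "192.168.1.9", "10.10.2.7", "tcp", 22))  # deny
```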

| Skill | Traditional DC | AI DC (Nvidia) | Premium |
|---|---|---|---|
| QoS/Lossless Ethernet | Basic DSCP/CoS | PFC/ECN/DCQCN for RoCEv2 | High |
| Topology Design | Spine-leaf, vPC | Fat-tree, rail-optimized | High |
| Network Monitoring | SNMP, streaming telemetry | GPU-aware telemetry, job completion time | Medium |
| Security | ACL, ZBFW | DPU-based microsegmentation | High |
| Optics | Pluggable SFP/QSFP | Co-packaged photonics | Emerging |

How Should CCIE Candidates Position for This Shift?

CCIE Data Center v3.1 candidates should treat Nvidia’s networking stack as required knowledge alongside Cisco ACI and NX-OS VXLAN EVPN. The exam blueprint doesn’t test Spectrum-X directly, but the underlying protocols — RoCEv2, PFC, ECN, VXLAN, BGP EVPN — are foundational to both Cisco and Nvidia data center fabrics. An engineer who passes CCIE DC and can articulate how Cisco NDFC provisions VXLAN EVPN AND how Spectrum-X implements adaptive routing over the same Ethernet fabric is dramatically more valuable than one who knows only traditional switching.
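VXLAN is one of the protocols that carries over directly between Cisco and Nvidia fabrics. A minimal sketch of the 8-byte VXLAN header defined in RFC 7348 (flags byte with the I bit set, plus a 24-bit VNI), which travels over UDP destination port 4789; the example VNI is arbitrary. For comparison, RoCEv2 packets are themselves carried over UDP destination port 4791:

```python
import struct

VXLAN_UDP_PORT = 4789   # IANA-assigned VXLAN port (RFC 7348)
ROCEV2_UDP_PORT = 4791  # IANA-assigned RoCEv2 port

def vxlan_header(vni: int) -> bytes:
    """Build the 8-byte VXLAN header: flags byte with the I bit (0x08) set,
    24 reserved bits, the 24-bit VNI, then 8 reserved bits."""
    if not 0 <= vni < 2**24:
        raise ValueError("VNI must fit in 24 bits")
    return struct.pack("!II", 0x08 << 24, vni << 8)

def parse_vni(header: bytes) -> int:
    """Extract the VNI, rejecting headers whose I flag is not set."""
    flags_word, vni_word = struct.unpack("!II", header[:8])
    if not flags_word & (0x08 << 24):
        raise ValueError("I flag not set; VNI is not valid")
    return vni_word >> 8

hdr = vxlan_header(10100)
print(hdr.hex())       # 0800000000277400
print(parse_vni(hdr))  # 10100
```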

The career path increasingly forks between “enterprise data center” (Cisco ACI, NX-OS, traditional workloads) and “AI data center” (Nvidia Spectrum-X, GPU fabrics, training clusters). Both pay well, but AI data center roles are growing faster and paying more. According to industry job postings, AI infrastructure teams at hyperscalers and GPU cloud providers (CoreWeave, Lambda, Together AI) list Nvidia networking experience as a preferred qualification alongside CCIE certification.

For CCIE Enterprise Infrastructure candidates, the connection is through SD-WAN and campus networks that feed AI workloads — understanding how traffic engineering and WAN optimization support AI model distribution across multiple data center sites. CCIE Security candidates benefit from understanding DPU-based security models that protect AI clusters at wire speed without consuming GPU cycles.

The Bigger Picture: Consolidation Meets Disruption

The networking industry is experiencing simultaneous consolidation and disruption. The $14 billion HPE-Juniper merger consolidates traditional enterprise networking. Google’s $32 billion Wiz acquisition consolidates cloud security. Meanwhile, Nvidia disrupts from the compute side — a GPU company that now outsells every traditional networking vendor in the data center.

This pattern mirrors what happened in server networking 15 years ago. When VMware’s vSwitch and later NSX moved networking into software, physical switch vendors adapted by moving up the stack. Now Nvidia is moving down from GPUs into networking, and the question for Cisco and Arista isn’t whether they lose the AI back-end market — they already have — but whether AI networking architectures eventually influence enterprise and campus designs.

For network engineers, the practical takeaway is diversification. A CCIE certification proves you can design and troubleshoot complex networks. Adding Nvidia’s ecosystem knowledge — even at a conceptual level — proves you understand where those networks are heading. The engineers who thrive in 2027 and beyond will speak both languages: traditional Cisco/Arista enterprise networking AND Nvidia’s GPU-centric AI infrastructure.

Frequently Asked Questions

How much revenue does Nvidia’s networking division generate?

Nvidia’s networking division reported $11 billion in Q4 FY2026 revenue, representing 267% year-over-year growth. Full-year FY2026 networking revenue exceeded $31 billion, making it Nvidia’s second-largest business segment behind compute GPUs. According to Zacks Investment Research analyst Kevin Cook, Nvidia’s networking division generates more revenue in one quarter than Cisco’s data center networking business produces in a full year.

Has Nvidia surpassed Cisco in data center networking?

Yes, in data center Ethernet switching specifically. According to NextPlatform and IDC data, Nvidia now leads data center Ethernet switch sales by revenue, with 11.6% market share captured in roughly three years through its Spectrum-X platform. Cisco remains dominant in campus, enterprise edge, and SD-WAN markets. The split reflects the growing bifurcation between traditional enterprise networking and AI-specific GPU fabric networking.

What technologies make up Nvidia’s networking stack?

Nvidia’s full-stack networking includes NVLink for GPU-to-GPU scale-up (260 TB/s on Vera Rubin), InfiniBand Quantum switches for HPC interconnects, Spectrum-X Ethernet switches for AI training fabrics, BlueField DPUs for network offload and security, and co-packaged optics for power-efficient optical interconnects. The $7 billion Mellanox acquisition in 2020 formed the foundation for this portfolio.

Should CCIE candidates learn Nvidia networking technologies?

Absolutely. While the CCIE DC v3.1 exam tests Cisco-specific platforms, the underlying protocols (RoCEv2, PFC, ECN, VXLAN, BGP EVPN) are open standards shared by Cisco and Nvidia fabrics. AI data center architect roles requiring both CCIE and Nvidia networking knowledge pay $180K-$250K+, a significant premium. Engineers who combine CCIE depth with GPU fabric understanding will command the highest market rates.

What is co-packaged optics and why does Nvidia invest in it?

Co-packaged optics (CPO) integrates photonic engines directly onto the switch ASIC package, eliminating pluggable transceiver modules. Nvidia’s CPO delivers 3.5x power efficiency improvement and 10x resiliency improvement versus pluggables. With AI racks drawing up to 600 kW and optics consuming 10% of power budgets, CPO is essential for scaling to million-GPU AI factories. Nvidia’s $4 billion investment in Lumentum and Coherent secures its optical supply chain.

Ready to fast-track your CCIE journey? Contact us on Telegram @firstpasslab for a free assessment.